15 Data Science Principles We Live By

As our Data Products organization grew in 2019, we felt we had reached a critical mass to better define who we wanted to be and how we wanted to work in 2020 and beyond.

Key takeaways:

Mathieu Bastian, director of data science and machine learning, shares our 15 data science principles. By publishing these principles, we hope to make anyone who is interested in data products understand what’s important to the data scientists and engineers in our teams.

As our Data Products organization grew in 2019, we felt we had reached a critical mass to better define who we wanted to be and how we wanted to work in 2020 and beyond. Over time, our standards and professionalism had grown and it became important to align as a team, consisting of data scientists and backend engineers who are working in different mission teams. We were inspired by popular concepts like the definition of awesome coined by Spotify and our own engineering principles.

We took the Data Products organization to an off-site to Paris in August 2019 to kick-off this collaborative effort and together, we created these principals.

{{Divider}}

{{slider}}

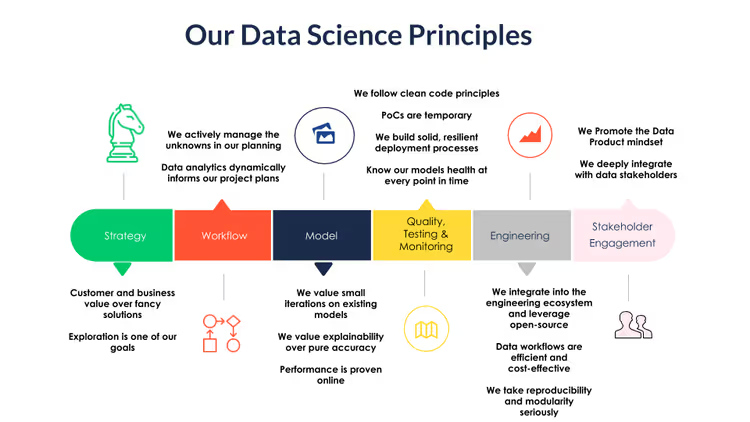

We currently have 15 data science principles categorized into six groups:

- Strategy

- Workflow

- Model

- Quality Testing & Monitoring

- Engineering

- Stakeholder Engagement

We plan to update them regularly.

1. Strategy

The strategy component is directly inspired by what we think drives business impact. Because building data products usually means a serious investment, we want to work on the right problems, the ones that drive business impact and bring value to our customers.

Customer and business value over fancy solutions

We build our strategy and prioritize our work from the customer and business point-of-view first. We do not look for problems to apply techniques on. When prioritizing, we do take the full cost into account - including opportunity cost.

Exploration is one of our goals

We proactively explore new ideas and develop proof of concepts. While success is never certain, we always make sure to extract learnings from our failures.

2. Workflow

Now that we have impactful and relevant problems to work on, the next question is how do we work and iterate on them? In other words, what is our workflow? Our north star is to deliver value as quickly as possible and use data and analytics to define next steps.

We actively manage the unknowns in our planning

Each project stage, including research stages, is time-boxed and characterised by its deliverable. Drawing a straight red line from the start to the end of the project helps us explore the numerous unknowns of a data science project early.

Data analytics dynamically informs our project plans

We continuously reiterate our project plans based on data-driven insights we have mainly gained from previous project stages. We consciously add project stages to collect more of these data insights.

3. Model

The core of our work is, and will always be, the model. A model should, of course, work as expected, but there's more to it. Similar to the difference between code and clean code, a great model should be extensible, robust and if possible explainable.

We value small iterations on existing models

We build upon what is already there. If we have to start from scratch, we try to establish a baseline as quickly as possible.

We value explainability over pure accuracy

We aim for building understandable and explainable algorithms. We proactively build processes and tools to connect with domain experts and stakeholders so that we can identify strange behaviors, encourage feedback, and gather insights.

Performance is proven online

Ultimately, the model value is defined as real world impact and not only by offline evaluation. We define clear and measurable success metrics and only call it a success when measured online.

4. Quality, testing and monitoring

As a mature organization, we care about maintainability and quality assurance. Similar to the You build it, you run it principal in engineering, we are the ones maintaining and operating our models. Therefore, we want to do ourselves a favor by making them robust and automating as much as possible.

We follow clean code principles

We keep up to date on the current clean code principles and follow them accordingly. This ensures readability, reusability and flexibility in our codebase which makes it easy to test, debug and hand over to other team members.

Proof of concepts (POC) are temporary

When testing a new idea, it’s fine to do it as quickly as possible. After the test, this must be taken out of production or built as a production quality product.

We build solid, resilient deployment processes

Our deployment processes should be designed to enable rapid iteration whilst minimizing risk. Where possible, we should build CI/CD pipelines and have automated testing to catch problems before they happen.

5. Engineering

The Data Products craft marries data science with software and data engineering. But what aspects of the engineering craft are important to data scientists? As we spend a lot of our development time with tools like Spark and Airflow, we mostly focus on best practices building scalable data pipelines.

We integrate into the engineering ecosystem and leverage open-source

We build upon our common engineering stack whenever possible and use the same tools engineers do. When possible, we leverage cloud/api services and strongly prefer open-source libraries. We closely align with our infrastructure/devops teams to avoid silos and surprises.

Data workflows are efficient and cost-effective

We design data workflows with an engineering perspective to take advantage of task parallelization and big data tools. Our solutions are built to be maintainable and cost-effective.

We take reproducibility and modularity seriously

Data and model workflows are reproducible, versioned and modular so debugging is facilitated. We leverage configurations and orchestration tools to limit the number of parameters and manual interventions. Whenever possible, we make the data/scores we compute available for other people and teams to leverage and contribute.

6. Stakeholder Engagement

Because data science, and even more so, data products, is still new to many people, we don't expect our product, engineering and business partners to have a mental model of what a great Data Products organization does. That's why we want to take a very proactive approach to engage with them. We also depend on them for data – think hierarchy of needs – so a partnership is the key to success.

We promote the Data Product mindset

We proactively seek out opportunities and push for ideas. We see through our stakeholders needs and propose creative solutions to the business problems they have. Explaining and promoting our way to tackle problems belongs to our core tasks.

We deeply integrate with data stakeholders

Our algorithms use data that is collected by other teams. We partner with our stakeholders to learn what data they collect and what it means, to educate them what we need their data for, to flag if information we need is missing or incorrect, and to find ways to avoid data issues efficiently.