How We Migrated a Microservice Out of Our Monolith

The Travelers UX team is responsible for delivering an enjoyable and consistent traveler experience across all our platforms. The team works on a broad range of topics, including optimizing the Wishlist feature, where travelers can save experiences on our website and app for future booking or planning.

Key takeaways:

Parag Khanna, senior backend engineer, and Ahmed Samir, fellow backend engineer, joined us earlier this year. Parag has been working on moving the existing logic of our website's Wishlist feature from our monolith to the recently released Planner service as a part of the Travelers UX (TUX) team. Ahmed has been building features for the Planner service.

The Travelers UX team is responsible for delivering an enjoyable and consistent traveler experience across all our platforms. The team works on a broad range of topics, including optimizing the Wishlist feature, where travelers can save experiences on our website and app for future booking or planning.

The TUX team also focuses on the customer history page, app download page, and search filters. We recently released a brand new Planner service to our Wishlist feature, which enables travelers not just to save activities on their profile, but also to create customized lists for different types of trips.

On the technical side, there were two reasons for the move: The first is to reap the benefits of microservice architecture. The second is to give autonomy to the TUX team and make them accountable for the development of features, deployments, and scaling.

Today we’ll look at:

- What does the new planner do?

- Why move out of the monolith?

- Challenges we overcame.

- Preparing for migration.

- The tech stack we used.

- Initial phases of development.

- Migration to new Service.

- Setting up analytics.

- Upcoming features.

What does the new Planner service do?

The Planner service is a microservice extracted from our monolith, affectionately named, “Fishfarm,” which was built on a monolithic architecture pattern to support the Wishlist feature for a customer. The service aims to:

- Provide a more interactive interface for travelers to Wishlist items.

- Right now, Wishlist items are activities, but they could eventually include other things like POIs and restaurants.

- Store all the traveler’s Wishlisted items.

- Group Wishlisted items.

- Enable the user to share Wishlisted items.

Why move out of the monolith?

The aim of extracting and building the Planner service out of the monolith was to take advantage of the benefits of microservice architecture like independent deployments, autonomous teams, and using different tech stacks. As the company scales up and the contributors to the monolith increase, it becomes difficult to handle a massive codebase.

This complexity was the case with modular architecture, where each team owned its own modules. Our monolith was written in PHP and our vision was to move towards Java for the development of our new services.

Challenges we overcame

- Old code on old stack.

- Much of the Wishlist feature had been untouched since 2016, with all of the business logic tightly coupled on the monolith.

- Touch (and failure) points.

- Mobile API, Fishfarm Web, Fishfarm AJAX and Fishfarm APIs routed with inconsistent implementation and naming and very low coverage on automated testing.

- Limited monitoring / tracking.

- Basic analytics events and no prior monitoring (if there was an issue with the Wishlist we did not have visibility)

- Not ideal for cross-functional teams nor the customer experience.

- Basic CRUD (Create, Read, Update, Delete), with no change history or soft delete (limiting usefulness for personalization).

Preparing for the migration

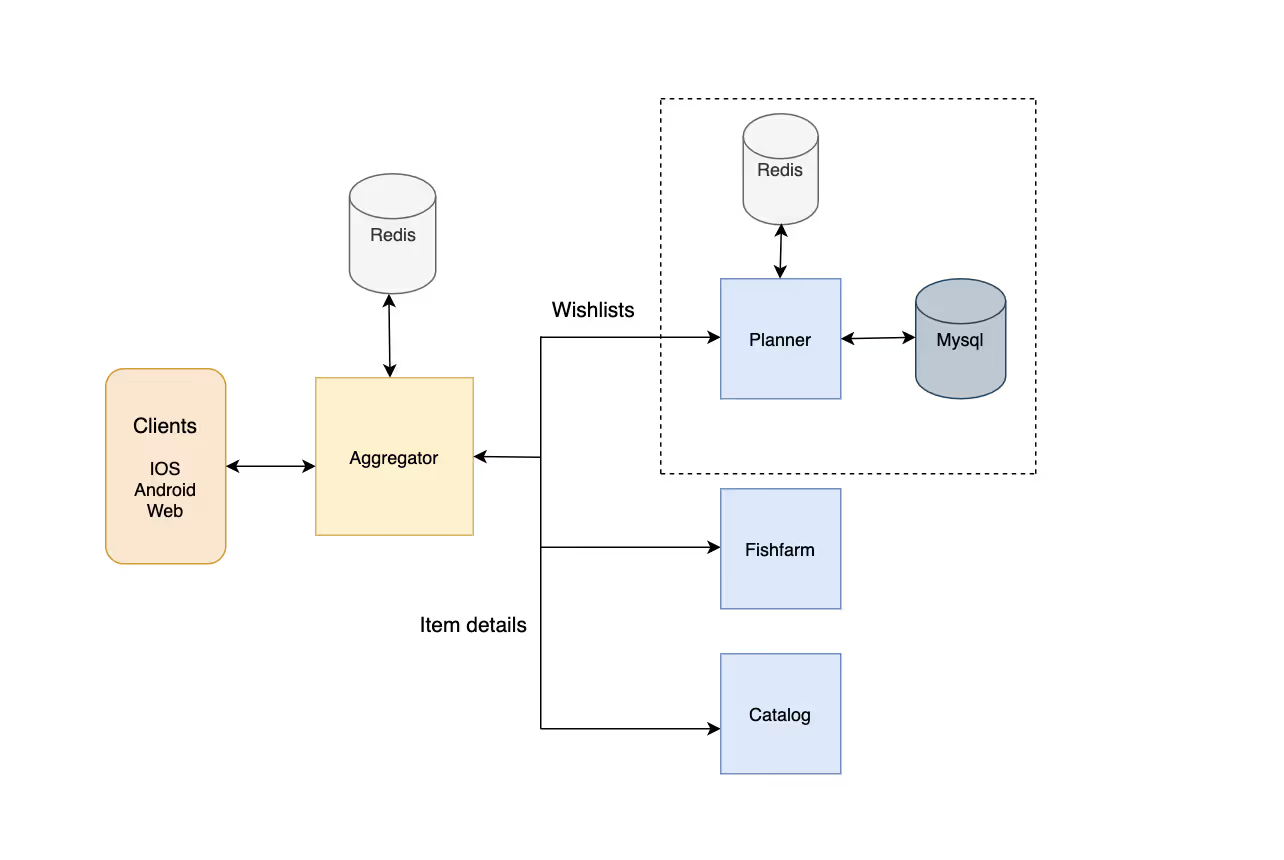

Before starting the development of the Planner feature, there was a design review phase. Javier Laguna, engineering manager for the TUX team, presented the design to the Marketplace team stakeholders. After getting the feedback from everyone, the following architecture for the service was finalized.

As per the architecture, clients would call the aggregation service to get the data from the Planner services. The Planner service will have all the necessary data for the activity, POIs, restaurants, and their hydration will be done in aggregation by calling the Fishfarm monolith* until it's broken into further microservices like catalog, search, and so on. Planner services do CRUD operations to our MySQL database using Hibernate. For caching on the Testing cluster, we use Redis, and for production, we use Elastic cache instance.

*In six months there will be many microservices out of the monolith and we'll be calling those services instead.

The tech stack we used:

Initial phases of development

We work in a cross-functional scrum. So to kick things off, we created mock APIs using Postman and shared it with the client developers, so they’re not blocked until we have all the APIs on the testing cluster.

Two months after we started the project, when we had baseline service ready, clients started using the testing cluster. This helped us figure out the bugs and missing functionalities during the client and service development phase.

For the development of the services we use the service framework built by our internal developer enablement (DEN) team, the structure helps build the Spring Boot project with Open API, Hibernate, Sentry, Datadog, and CI/CD set up in no time. As backend developers, we need to focus on writing the business logic and not worry about setting up everything from scratch.

For the testing of the services, which I consider the most important part of the development of any service, we had three types of tests:

- For Unit tests we used Junit and Mockito.

- For Integration tests we used Flyway for database migration and Embedded Redis to test the Controller.

- Since the response of Planner service was consumed by the aggregator, we wanted to be sure that the aggregator service didn’t break when we changed the contract. To prevent that, we used Spring Cloud Contract to write the contract tests and added the step in our CI flow to avoid the deployment of Planner if it breaks the existing contract with the aggregator.

- For Code Coverage we integrated with Codacy and at the time of the deployment of the service we had the coverage of 78%, we are still working on improving it.

- For Code Style we used a checkstyle library which helps us write readable code.

Our CI/CD flow prevents the deployment of the faulty package and contains the following steps:

- Clone the Planner Repository.

- Compile and run tests.

- Publish stubs for contract tests to nexus.

- Publish codacy code coverage report.

- Build jar file.

- Build docker image

- Clone aggregator service and run contract tests.

- Deploy on production.

- Publish open API specs.

We have almost the same steps on the aggregator service, which helps prevent us from deploying a faulty package.

Migration to new Service

After we got the APIs on the testing cluster, the next challenge was the migration of the data e.g. for 2019 and 2020 [millions of rows] from existing tables to the new Planner service database. The existing logic was the Automatic Grouping feature of the Wishlist items, where the user used to have only one list with all the items being grouped in the service code based on the location. The new Planner service allows users to create lists based on single or multiple locations manually.

In the existing logic, let’s say users add 4 items: 2 from Berlin and 2 from Milan. In the database, it persists only 1 list and 4 items. When a user fetches the list, they get 2 lists based on the location — this is called automatic grouping. So a user can’t have the same list with items from multiple locations.

But the new Planner service allows users to create custom lists with items from various locations based on the user’s own requirements. So, while migrating, we needed to be careful to group items based on different locations and create unique lists based on location and customer, so existing user experiences were not interrupted.

We did a one-time migration process to export the data from our Fishfarm database into the new database of the Planner Service.

We updated all clients to support Feature Toggles (or Feature Flags), which acts as a switch to control if the client communicates with the monolith or the new Planner.

As a failover we wrote the data from the new service to the old tables using the existing APIs. In case there was an issue, we could turn the flag off, and the client could start calling the old endpoints without losing the data.

For Stress testing, we ran a load test on the aggregator as it acted as a gateway to the downstream services. When we deployed on production and clients started calling the service, and the response from Planner was hydrated in the aggregator by calling the Fishfarm and catalog service, it was required to be sure that the aggregator was able to serve the requests.

We started with 10 rps and increased it to 25 rps with latency under 100 ms and no 5xx on a single node using Apache JMeter for random users we have in a CSV file. Considering the traffic in the past and planning was not used by all the users like search and discovery, 25 rps was more than sufficient with the current infrastructure setup for aggregator service.

In terms of infrastructure, our Service Reliability Engineers (SRE) and DEN team built a CI/CD flow to take care of deployment with every merge to master on to the Kubernetes cluster. Considering the COVID-19 situations and the number of requests we were getting, we deployed the service on production. We started with three replicas for the service, a medium instance of Elastic cache and a large instance of Aurora MySQL.

Setting up analytics

We used the analytics pipeline (described in the linked article) to send events. We call the API developed by our analytics team e.g. Collector API with the defined Event based on the user action on the Planner. The data later is analyzed by our product manager to figure out the user behavior and where we can improve the product.

Along with the analytics events, we publish events to Kafka topics which are later consumed by our CRM team to engage or notify users with the items they have added to the Wishlist.

Upcoming features

Two days after officially launching the Planner service, thousands of new lists were created by travelers and even more activities were saved to them. The custom lists reflect real honeymoon trips and genuine bucket list ideas. The feature couldn’t have come at a better time as many travelers are socially distancing at home and will be well-prepared when planning their next experiences.

Until now, we have worked with migrating and building the existing features we had, In the next few months we are moving towards improving what we call the Trip Context, which will allow users to plan a trip, share it with their friends and family members.

For updates on our open positions, check out our Career page.