5 Crucial Questions About A/B Testing Answered

We apply A/B tests if we need to decide whether a variation of the status quo would perform better than the status quo itself with respect to a given measure.

.avif)

Key takeaways:

In today’s post, Alex Weiss, Engineering Manager - Machine Learning, answers 5 key questions on A/B testing.

{{divider}}

Introduction

We take great pride in delighting our customers at GetYourGuide, which is why all our efforts ultimately aim for a better user experience. There are a variety of ways to learn how we can serve our customers most, and A/B testing is one of our favourite analytical tools.

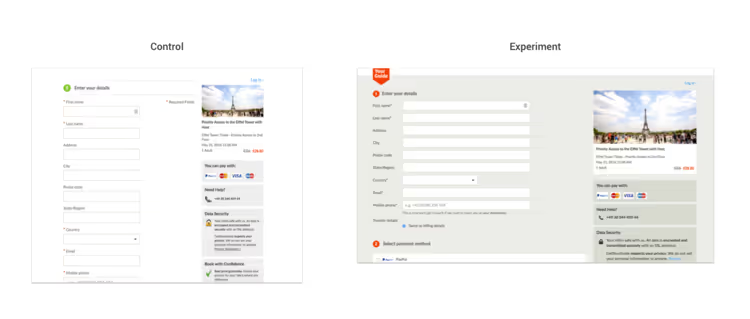

We apply A/B tests if we need to decide whether a variation of the status quo would perform better than the status quo itself with respect to a given measure. A classical example originates from web design: will the share of users clicking on a particular button increase if the button is repositioned on our website? To answer this question, we can run an A/B test comparing the website’s current layout with the new variant. An obvious measure to look at is the share of visitors clicking on the button.

The rise of A/B testing went hand in hand with the rise of the World Wide Web. The technical possibilities and the amount of data allowed for making decisions in a purely statistical, data-driven manner. Google, for example, ran its first A/B test in 2000; it ran 7,000 A/B test in 2011. Nowadays, A/B testing is ubiquitous for front-end developers, product designers and analysts. A simple online search for “ab test calculator” results in numerous websites that analyze an A/B test’s result. At first glance, there is no need for the experimenter to dig deeper into the method’s statistical foundations.

The lack of deeper knowledge about the A/B test’s underlying theory is nevertheless concerning since it raises wrong expectations and promotes incorrect use. In the following post, we shed light on some aspects of A/B tests that are often unknown or mistaken. We motivate our insights by questions our analysts and data scientists are often asked by their colleagues. We do not provide a complete introduction to A/B testing in this article, so if you are unfamiliar with the basic principles and terms, we recommend to read one of the numerous online introductions first.

Q1 When can I apply A/B testing?

You can apply A/B tests if you have (at least) two ideas for how to achieve a particular goal, and you want to know if one idea performs better. The meaning of ideas is broad: you might think about two different website layouts, two different email subjects, or even two different strategies to allocate your marketing budget to different marketing channels.

An A/B test requires that you can apply your ideas in many trials. The meaning of trials depends on the context again. You can show your layouts to the many visitors of your website; you send your emails with different subjects to the members of your mailing list; you can adapt the budget allocation on a daily level. Each single trial must have an outcome and the outcome is often binary, i.e. the expected action happens (success) or does not happen (failure), but it can also be a real number. A user (not) clicking on a button on a website or (not) opening an email is a binary outcome; the revenue resulting from a particular budget allocation on a single day is a real number.

Another requirement is that the two groups A and B should not know about each other. If a visitor has already seen website layout A, they can be biased in their reaction to layout B. If a marketing channel’s performance depends on the overall budget that is invested in it, the individual performances of A and B might be biased in the experiment. If possible, the experimenter should actively prevent such dependencies between the groups, for example by making sure that the same visitor always sees the same layout.

Q2 What is a good metric?

A good metric is usually the average outcome per trial. This is a real number for a real-valued outcome, and it is the success rate, i.e. a number between 0 and 1, for a binary outcome if we encode the failures with 0 and the successes with 1.

Everything beyond this kind of metric is troublesome. We have already seen proposals for A/B tests with very sophisticated success metrics – think of dividing the square root of the number of successes by the number of trials. These metrics might make sense from a business point of view, but whenever you apply a nonlinear function to a value that is some aggregation of several trials, you lose your ability to consider the experiment as series of individual trials. This will make your test’s evaluation much harder (or even impossible) from a statistical point of view.

Q3 Can I consider several metrics in the same A/B test?

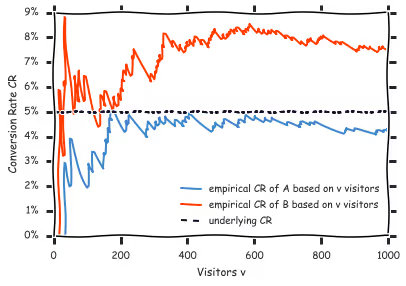

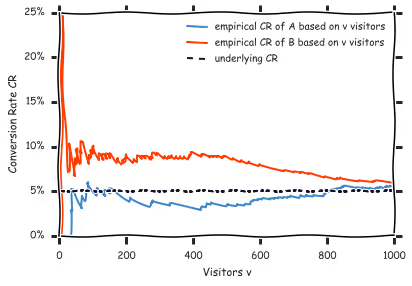

You can… but you should not. No matter how well you setup your A/B test, there is a chance that the test result will mislead you. For example, the test results could suggest that B performs significantly better than A although the performance is actually equal (see figure above). If you look at several metrics at the same time, you multiply the chances for such an error. There are mathematical ways to deal with this issue, the better solution is to focus on one measure. The restriction to one metric will also challenge your own understanding of what you actually want to learn from your test.

Q4 How long do I need to run the test to get significant results?

There is no universal answer to this question. An A/B test can only have two possible results:

- It is very likely that the two versions do NOT perform equally, which is a technical way of saying that one version is most likely more performant than the other one.

- We cannot say that it is very likely that the two versions do NOT perform equally, which is an even more technical way of saying: “Don’t know for now. Might be that they have the same performance, might be that we need to run the experiment longer to detect the difference.”

There are two forces fighting against each other in every A/B test: There is the true performance difference between the two versions in the one corner, and there are random fluctuations – also known as noise – in the other one. The good news is that the random fluctuations become smaller and smaller the more trials we have. The bad news is that the true difference might be so small that even very small random fluctuations still cover it.

Let’s explain with an example of how to find the minimal number of trials. We revisit our website layout again: let’s assume that the current success rate is 1% i.e. 1 visitor out of 100 clicks on the desired button. We must now agree on the minimal uplift that we want to be able to detect with our A/B test. In our example, we have not optimized too much by now, thus we expect an uplift of 5% at least – which translates to an expected success rate of 1%*(1+5%) = 1.05%. We can now apply one of the online A/B calculators to determine the minimal number of trials that are necessary to be able to detect the minimal uplift with respect to some statistical significance. We would need 870K visitors pro version in our example (significance level: 95%). If we would expect an uplift of 10% at least, the number of visitors needed would go down to 200K. If our initial success rate was 50%, we would only need 5.1K visitors to detect an uplift of 5%.

If you have a stable number of daily visitors, you can easily translate the number of trials to a running time. Both stopping criteria are fine.

You can even come up with your own, unique, stopping criterion as long as you accept one limitation: the stopping criterion must not depend in any way on the test’s current performance (see figure above). Though you can find scientific papers about stopping an A/B test early, you should only use those shortcuts if you understand the underlying ideas and potential restrictions to your test’s significance.

Another aspect to consider when determining the running time is the test’s potential dependency on the current context. It is well known that customers of online retailers are often more active on the weekends. If you run a test on conversion rates, you might want to cover a whole weekly cycle at least. An A/B test in December might tell you that the email subject “Buy your Xmas gifts with us!” is significantly better than “Still missing equipment for your summer holidays?”; but it’s a dangerous bet that this result will hold true for the whole year. Also, remember that the dependency on context is not always this obvious.

Q5 How can I show with an A/B test that my variant is 5% better than the original version?

You’ve finally made it. You prepared your test, you ran your test, you calculated that one version is significantly better than the other. Congratulations! The next question is probably by how much the winning version is better. Unfortunately, an A/B test only tells you that one version is better, it does not tell you anything about how much better this version is. There are mathematical tools to calculate the probability that B indeed performs at least 5% better than A given both versions’ test performances, but this analysis is beyond the scope of a classical A/B test and deserves its own blog entry.

Thank you, Alex, for answering these questions. Interested in joining our Engineering team? Check out our open positions.

.JPG)