Experimenting with Multidimensional Bayesian Statistics

Our product teams conduct experiments to understand and evaluate the impact of new features on our customers. In a nutshell, an experiment involves exposing a sample of our customers to different versions of the product and evaluating the change in target business metrics, typically funnel metrics.

Key takeaways:

Marco Venè, senior data scientist, and Laura Lahoz Gonzalez, data analyst, tackle different data challenges to support the business and product teams. One of our core responsibilities is planning and analyzing the results of experiments to guide decision making in product development.

In today’s post, they walk you through the implementation of Multidimensional Bayesian Statistics in their experiment analyses, the rollout of the project with an internal R Package and a Databricks dashboard and its impact on our product teams.

{{divider}}

Intro to Product Analytics experiments

Our product teams conduct experiments to understand and evaluate the impact of new features on our customers. In a nutshell, an experiment involves exposing a sample of our customers to different versions of the product and evaluating the change in target business metrics, typically funnel metrics.

Because our sample is split randomly into different groups, we can be confident that the observed difference in the metrics results from our changes to the product, rather than other external factors.

Experimentation is such a powerful tool to improve our product. We're committed to delivering the best possible product to our customers, so our product teams run several experiments each month.

As members of the Data Products and Data Analytics teams, we are involved in different phases of an experiment's lifecycles, such as:

- the delivery of insights for features ideation and prioritization

- the selection and definition of target metrics and experiment timelines

- the specification of data tracking requirements

- the experiment analysis and reporting

First we look at the challenges in the experiment analysis and reporting.

You may also be interested in: What’s a good F1 score?

What makes a good insight

As data experts, we want to deliver robust, actionable, and fast insights for our product stakeholders. Let’s go over these three aspects more in detail.

Robustness

By robustness, we mean that the insights should be accurate and statistically valid. As we mentioned above, an experiment works by randomly splitting a sample of customers into groups. At first glance, one could think that simply comparing the results of group A vs. group B would be enough to understand and report the impact of a feature release. For example, suppose we launch a new Filter menu design for 50% of our visitors, and we observe a conversion rate uplift of +2% after one day. In that case, we could conclude that our experiment is positive, roll out the feature to 100%, and move to the next experiment.

Unfortunately, we have to deal with the nature of random variability of events. For example, if I toss a coin twice and get two heads, we cannot be sure that the third toss would be another head. In AB testing, this means that just because we notice a +2% conversion rate uplift today, it does not mean that the uplift would be the same tomorrow. On the contrary, we may see a -3% decrease tomorrow or any other values.

You may also be interested in: Deploying and testing a Markov Chains model

Luckily, we can use statistical methods to estimate the random variability and derive confidence intervals for our uplift estimates that clarify the real impact of our changes, accounting for random variance.

The robustness of our results depends on the statistical validity of our approaches. In the next section, we will explain how we leveraged the Dirichlet distribution to evaluate the confidence interval of our target metric and the difference of this Bayesian approach over the frequentist approach.

Actionability

By actionability, we mean that product teams should be able to iterate based on the insights we produced. In an experimental context, actionability is often a function of the granularity of the insights. The more targeted and precise the insight is, the easier it will be for teams to develop the following product features. For example, in an AB test, Analysts could decide to report a metric uplift for B Vs. A and tell the product team which version is the winner statistically.

While evaluating the winning variation is the primary goal of an experiment, to keep iterating on our product, it is essential to understand the underlying factors that determined the outcome.

You might also be interested in: Selecting and designing a fractional attribution model

While AB testing helps in isolating the effect of single changes on a target metric, there are still mixed effects that we can investigate and account for. A classic example from our business is the different outcomes that a new feature can have for desktop and mobile platforms. For instance, let’s say that our new filter design delivered a significant +2% uplift, with a 95% confidence interval between 1% and 3%. Our result is robust, but is it actionable?

Surely, product teams can confidently roll the new filter design to 100% of our customers and enjoy the improved conversion rates, but how will we further improve the new filter design? What if we analyzed the conversion rate uplift broken down by Desktop and Mobile platform?

Such an analysis could results in observing:

- Desktop: +6%, with 95% confidence [5%-7%]

- Mobile: -4%, with 95% confidence [-6%-2%]

Is this result more actionable?

The answer is YES. Because now we can focus on iterating on the mobile version of our filter, maybe the new functionalities are great, but users cannot easily interact with it on a small screen, and we need to iterate on the designs.

That’s a different actionability than reporting the overall aggregate results. In the next section, we will detail the challenges of maintaining statistical robustness while disaggregating results and how we leveraged the Dirichlet distribution to tackle the challenges and deliver robust and actionable insights at very granular levels.

You might also be interested in: Running and scaling a synthetic AB testing platform for marketing

Speed

Our product teams roll out new features at a fast pace. As an agile tech company, we want to deliver a better product to our customers sooner than later. However, the data teams need time and a lot of data to both guarantee the robustness and actionability of results, which depends on sample sizes.

While we have limited control over the number of customers visiting GetYourGuide, we have complete control over data processes and reporting pipelines to deliver insights to our teams.

This means that we can automate the repetitive parts of our workflow to focus on the most fun and rewarding part of exploring product development opportunity areas and support product teams delivering the next features. In practice, once we launch an experiment, extracting the right data to produce robust, actionable insights should be straightforward and mostly automated.

The shorter the period between the end of an experiment and the delivery of the analysis of the results, the sooner a product team can embrace the learnings and work on the next iterations. Given that a product team runs multiple experiments a month and that we have multiple product teams in need of insights, delivering reports fast increases the time we spend on data-driven product development.

In the following sections, we will explain how wrapping our Multidimensional Bayesian Statistics methodology into an R package, and a dashboard helped share the technique with other analysts and run the analysis at scale.

Our Bayesian approach

To better understand how we tackled the statistical robustness and actionability challenges, let us introduce multidimensional Bayesian stats for our use case.

To understand how this works, we have to understand the one-dimensional case, first. This one is mainly based on the Beta Distribution. In an experiment analysis, the Beta Distribution allows modeling the probability of success, given the observed data. The Beta Distribution takes as parameters α and β, corresponding respectively to the observed number of successes + 1 and number of failures + 1, and outputs the posterior distribution of the probability of success, treating the probability of success as a random variable.

In a product experiment we typically count conversions as success and bounces as failures. These counts can be used as prior inputs for the derivation of Posterior Beta Distribution values of the conversion rate, i.e the probability of success.

(n conversions, n bounces) -> p(conversion rate)

By resampling from the Conversion Rate Posterior Beta Distribution, we can estimate credible intervals for the unknown true conversion rate.

For example, by drawing 100 samples from Beta(1,9), we get 100 values for probability of success, from which we could derive a 80% credible interval of lets say 0-20%. The larger the amount of trials and the success and failure counts, the smaller the variance of the posterior values estimates. For instance, 1,000 trials from Beta (100,900) would result in a much narrower 80% credible interval of 9-11%.

The Dirichlet distribution is a multivariate version of the Bayesian Beta Distribution.

It extends the Beta Distribution, by setting as prior an arbitrary number of buckets, instead of 2 like in the Beta, and deriving a random probability vector for the proportion of counts, which adds up to 100%.

(n counts) -> p(n counts)

In the example below you can observe five resampling trials from a Dirichlet distribution with 3 buckets A, B, C, with observed counts of 12, 3, 5 respectively for each bucket.

You can observe how the proportion of the first bucket is larger since since we count more A's than B's and C's in our observations. By increasing the counts in each bucket, the variance of each column would decrease since we get more confident about the true proportions.

In our experiment analysis, we leverage the properties of the Dirichlet Distribution for analyzing the performance of the metrics broken by some dimensions of interest.

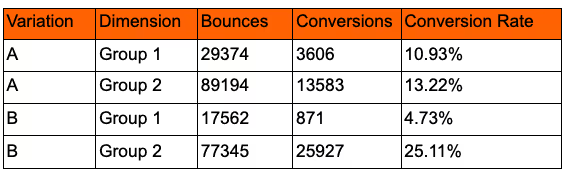

We first derive the counts of customer Bounces (failures) and Conversions (success), broken down by the Experiment Variation and another dimension of interest; this could be anything, for example, marketing channel or Customer Country.

We then reshape the data and pass as inputs to the Dirichlet Distribution the counts of Bounces and Conversions for each variation.

Drawing random samples from the distribution, we can derive the Posterior Distribution for each bucket and create the comparison of relevance. Below you can see the experiment results broken down by one of our funnel dimensions in Product Analytics. The faceted chart shows the posterior distribution of the target metric for each dimension and experiment variation.

By looking at the graph, we can see how the experiment's performance differs by dimension. This insight can point analysts in the right direction for further investigation of interaction effects between the experiment changes and business dimensions, enabling laser-focused product improvement recommendations.

As part of our Dirichlet toolkit, we also create a Bayesian p-value by computing the share of random resample trials where the test variation conversion beat the control. The metric allows product managers to discern with a clear yes/no the experiment winning variation, that would otherwise be more ambiguous by only looking at the posterior distribution differences.

You might also be interested in our podcast: An inside look at the Data Analytics team hiring process

Going into the statistical discussion of Bayesian and Frequentists methods is beyond the scope of this post. In addition, both methods are statistically robust and, in many circumstances, can be used interchangeably.

However, it is crucial to notice that we can skip some steps of the classic frequentist workflow in the Bayesian framework. Sample Size Analysis and Family Wise Error Rate adjustments for multiple testing are not necessary.

The Dirichlet multidimensional method's robustness to low sample sizes helped us in the covid period. The low traffic made it hard to test frequentist significance in a reasonable business timeframe, especially for dimensional splits.

Developing a full-stack solution

From the beginning, we knew that this idea could only deliver the expected impact if it were accompanied by a comprehensive and user-friendly implementation, both for other analysts and our product teams. Often, developing a solid analytical methodology is not enough to guarantee reproducibility and scalability to other projects. To achieve full power, we transitioned our methodology first to an R package and then to a self-service notebook that product managers and analysts nowadays use autonomously.

From a statistical method to an R package

To increase usability, it is crucial to abstract the method and create functions that can be understood and easily reused by others. At its core, an R package does just that.

Before jumping into the package functionalities, we walk you through the history of our collaboration from ideation to implementation.

You might also be interested in: Leveraging an event-driven architecture to build meaningful relationships

At GetYourGuide, every two months, we are encouraged to participate in HackDays, a perfect space for innovation where employees propose projects and collaborate with other colleagues on particular initiatives during two consecutive days. It was during one of those HackDays when we ideated an R package for internal team members.

The package was meant as a toolbox of functions that would help speed up the conduction of recurrent pieces of analysis, such as Year-over-Year metric comparison and opportunity sizing, while encouraging the standardization and sharing of analytical methods and their documentation.

During the first HackDay, we learned all about how to properly set up the files and create the package documentation using roxygen2 (mainly following R packages by Hadley Wickham as a reference). We added a data extraction and a data visualization function for each analysis type. We opened access to the library to anyone at GetYourGuide by placing it in a shared repository and uploading it to the cluster to be easily downloaded and used in Databricks.

We enjoyed collaborating on a shared analytical package in the Hackdays, where we pitched the project to the broader analytics team and advocated for its extension and adoption. Everyone was on board with the technology's potential, which motivated us to keep developing and sharing the progress.

You might also be interested in: 15 data science principles we live by

We worked on the package regularly and, of course, in subsequent Hackdays. By the time we decided to add the Bayesian Analysis features, we already had a functioning package skeleton integrated into Databricks and a fluid work dynamic that enabled us to iterate.

In addition to wrapping and sharing analytical methods, our package format also provided us other advantages such as creating documentation, version controlling, and collaborative development.

We organized our functions with logical naming and distinguished the functions to extract and prepare data from the analytical functions that ingest the data and output the analysis in charts and tables.

This allows us to control that the data preparation works as expected and that the data are clean for analysis while also enabling users to get familiar with the data input for the analysis, making the methods more transparent and easier to understand.

Currently, the package is private, and hence the code can be run on GetYourGuide controlled machines only.

Below is an example of how we run the code in Databricks notebooks.

# Load the library

library(library name)

# Read documentation about the method and the data

?get_data_experiment_by_group

?analyze_experiment_by_group

# Pull the experiment data

data <- get_data_experiment_by_group(...keep reading...)

# Run the Bayesian analysis

analyze_experiment_by_group(data)

How the package works

Let’s understand these functions more in detail.

The get_data_experiment_by_group preparation function takes as input the distinguishing characteristics of an experiment. Among others, this includes:

- The experiment ID defines the experiment uniquely.

- The success metric, defining the number used to evaluate the performance of the experiment. The definition of success varies from one experiment to another, especially during low traffic periods, when it takes longer to achieve enough sample size to assess standard metrics like conversion rate. In this context, other metrics such as the share of visitors adding to cart, checking availability, or visiting one of our Activity Detail Pages can become proxy success metrics.

- The assignment condition defining the users’ sample exposed to the experiment. For example, experiments are running only in a specific subset of locations. Only the visitors who perform a certain action are assigned, experiments with single vs. multiple assignment touchpoints, etc.

- The relevant dimension to split the analysis by. For example, the customer platform (i.e. desktop, mobile web, apps) determines the screen size, influencing the visibility and design of the tested feature changes, which often impacts results. Also, many other dimensions can be relevant for specific experiments only, such as visitor language, marketing channel, a path followed to reach a particular page, destination country, etc.

You might also be interested in: How we built our modern ETL pipeline

The function then uses the inputs to generate a SPARK SQL query computing the count of success and failures based on the success metrics, split by the chosen dimensions and the experiment variation, and outputs a data frame that can directly be inputted to our analysis function. This is the table that you already saw in the previous section.

When dimensions can take too many different values (take visitor language as an example), the data extraction function would already compute the five most relevant groups, according to the number of visitors, and group the rest. This is very handy when displaying the output in a chart.

The analyze_experiment_by_group analytical function takes only the prepared data as input and output the analysis in different formats, such as a density distributions comparisons chart (like the one displayed above) or a table with summary statistics, such as the probability of B outperforming A based on our resampling approach. As discussed above, this is the metric used by product teams to decide on the experiment winning variation, similar to the p-value.

The following section shares how we further integrated the R package in the GetYourGuide tech stack. Also, we discuss additional tooling that we developed on top of the package to share the methodology with product managers and business stakeholders.

Embedding the library functions into a self-service Databricks Notebook

Around Spring 2021, international travel started to pick up again, which meant increased traffic, achieving big enough sample sizes to detect significant uplifts in fewer days and, therefore, the ability to run more experiments in a shorter time frame and iterate faster.

To shorten time to insight and increase the level of analysis self-service among product managers, we decided to take one step further in improving the usability of the Multidimensional Bayesian Experiments functions.

We created a Databricks dashboard where product managers could configure their experiment analysis through a series of widgets where dimensions and metrics were expressed with business-friendly terminology.

The notebook then transforms the widget inputs into SPARK SQL queries and variable names that can be passed again to the package functions. We also developed the new functionality to automatically loop the analysis for multiple pre-selected dimensions to display multiple dimensional plots in a unique dashboard, further increasing insights generation power.

A real world example

To wrap up, we describe how we used the self-service dashboard to analyze a real experiment, testing a new feature on our activity pages. We replaced the real dimension values because of confidentiality.

As described above, first we select our analysis dimensions by a widget menu, along with target metrics and other details.

Then, the data extraction and preparation happens under the hood, and after a few seconds, graphs are automatically displayed.

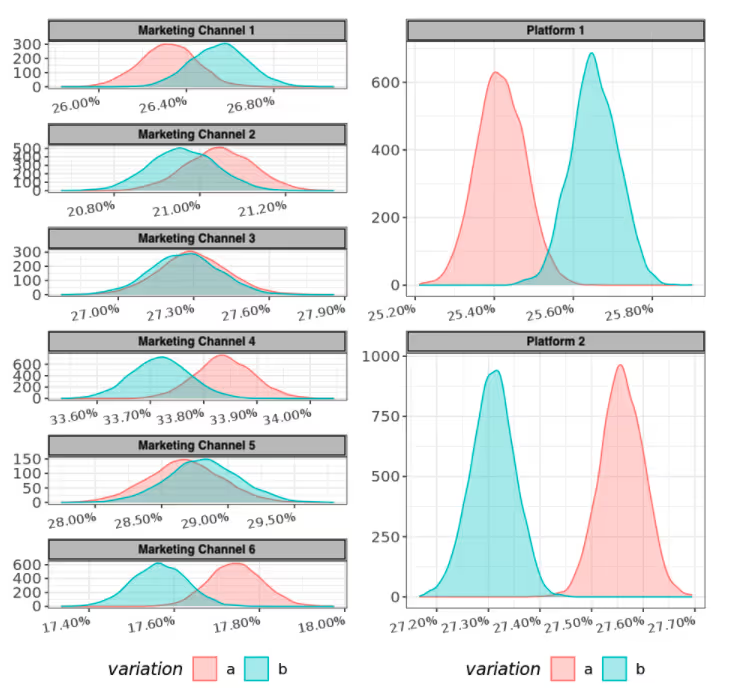

Below we show two example graphs.

You can observe the posterior distributions for our target metric on the left, split by variation A and B and the marketing channel. In the chart, we notice how certain channels such as 4 and 6 are driving the performance of B over A down — while the opposite is true for channel 1.

On the right, you can see a second visualization of the same data, split instead by the Customer platform. Analyzing multiple splits side by side, we can enrich the context of our analysis and derive additional and more precise insights.

In this case, we observe how the platform is a crucial performance differentiator, more than the marketing channel. Platform 1 is convincingly better in B, while platform A is going the other direction.

If we had reported only on the blended outcome here, we would have missed entirely that the experiment needed further iterations, mostly on platform 1.

This insight pointed us to further investigation and eventually allowed us to deliver targeted recommendations to our product team.

We hope this gave you a glance of the experiment analytics power at GetYourGuide and that you enjoyed reading about our methodology and implementation approach, as much as our product managers who love the