Powering traveler curiosity: the four pillars of ‘good’ Search at GetYourGuide

Power Search at GetYourGuide with the four pillars of “good” search—recall, precision, business value, and intuition. Learn how we improve search suggestions for 200K+ experiences using AI synonyms, typo tolerance (fuzzy matching), and smarter ranking to deliver relevant, intuitive results that drive discovery and conversions.

At GetYourGuide, Search is a gateway to discovery. With over 200K experiences offered across 18K cities and millions of collections, helping users find exactly what they’re looking for — and what they didn’t know they wanted — is both a critical business function and a fascinating technical challenge.

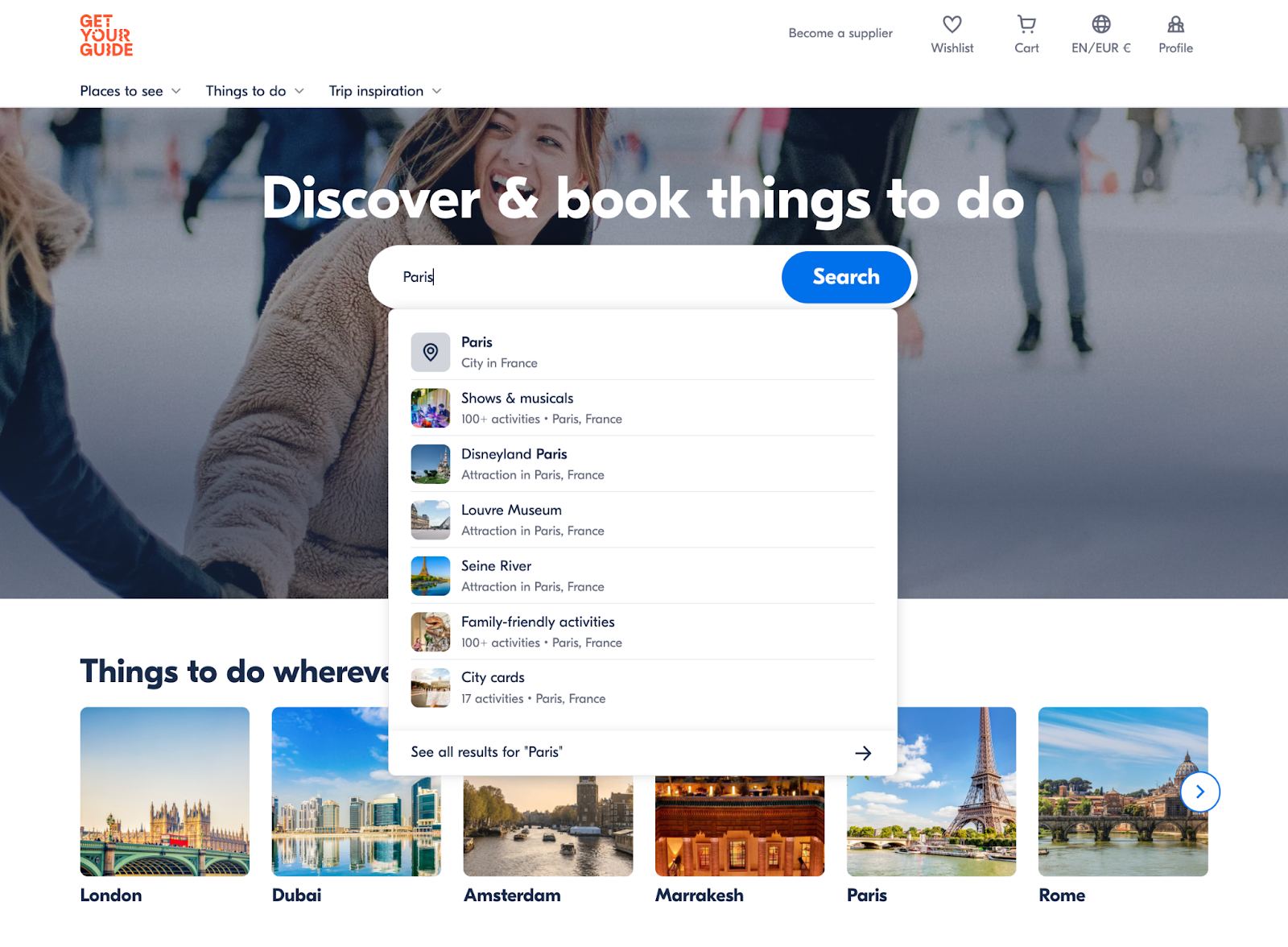

In the last few years, we’ve invested heavily in improving Search at GetYourGuide, and a core part of that has been our Search Suggestions, which are a key driver of exploration on our platform.

In this blog post, we’ll explore what enables effective, intuitive search functionality in our app and how we continually improve our systems to deliver the best results for our users.

Search at GetYourGuide operates in two core modes:

- Structured Search: These are precise SQL-like queries, such as “give me all the activities for Location ID X”.

- Unstructured Search: Natural language text queries like “explore Paris” that power our autocomplete and search results pages.

Unstructured Search is a fascinating challenge because it must match free-text queries to our vast inventory while delivering relevant, intuitive results. It’s not enough to just match on activities that contain the query text; we need to try to understand the search intent and serve relevant results — even when the user query leaves a lot to be desired. At GetYourGuide, we think about Search as four fundamental pillars.

The four pillars of ‘good’ Search

Pillar 1: Recall - cast a wide net

Recall is all about breadth. When a user searches for “Paris,” we need to find everything and anything remotely related to Paris. This is pretty easy to achieve with modern search engines, especially for simple queries like “Paris”. We start to encounter problems when the user types anything more complex, like “Explore Paris”.

The problem with basic recall

Traditionally, Search works by matching exact terms in queries to terms in our search index. To ensure accurate results, we used a logical AND operator to return only suggestions that contained all the query terms. This approach has significant limitations. When we considered the search “Explore Paris”, we immediately ran into problems in our Search experience:

- We found exact term matching to be too restrictive. Our index had plenty of documents with the term “Paris”, but none contained the term “Explore”. As a result, the search returned zero results.

- Users don’t always know the exact terms we use in our content. We have a collection of activities called Cruises & Boat Tours, and as GYG employees, we know how to find it since we know what it’s called. But should our users have to know our inventory to discover relevant activities?

- There’s no tolerance for typos here! Paris is easy to spell, but what about Reykjavik? We needed to allow some room for error, especially since our product focuses on exploring new locations (which are often hard to spell).
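The first limitation is easy to reproduce with a toy example. The sketch below is plain Python rather than a real search engine, and the suggestion titles are made up for illustration; it only shows why requiring every query term (logical AND) returns nothing for “Explore Paris”.

```python
def tokenize(text: str) -> set[str]:
    """Lowercase and split on whitespace; real analyzers do much more."""
    return set(text.lower().split())


def and_match(query: str, documents: list[str]) -> list[str]:
    """Return the documents that contain every query term (logical AND)."""
    terms = tokenize(query)
    return [doc for doc in documents if terms <= tokenize(doc)]


# Hypothetical suggestion titles, for illustration only.
SUGGESTIONS = ["Paris", "Paris Walking Tours", "Cruises & Boat Tours"]

print(and_match("Paris", SUGGESTIONS))          # ['Paris', 'Paris Walking Tours']
print(and_match("Explore Paris", SUGGESTIONS))  # []
```

No suggestion contains the term “explore”, so the AND condition can never be satisfied, even though two suggestions are clearly about Paris.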

To resolve these problems, we had two core solutions.

Solution 1: Intelligent synonyms

A synonym is a word or phrase that means exactly or nearly the same thing as another word or phrase. Synonyms are essential in Search because they allow our users to express themselves in their own words; users no longer need to know which words we use to describe our inventory. We leveraged AI to dramatically improve our synonym coverage using three straightforward steps:

- We prompted ChatGPT to generate 50 high-quality aliases for each collection of activities we had in our inventory.

- Then we indexed these Synonyms in OpenSearch as a new field in each Collection.

- We updated our search query to support searching on the actual collection name and its associated synonyms.
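The last two steps can be sketched roughly as follows. This is a minimal illustration, not the production setup: the field names `name` and `synonyms`, the `^2` boost on the collection name, and the example synonyms are all assumptions. The functions only build request bodies as Python dictionaries, so no OpenSearch client is needed to run them.

```python
def collection_document(name: str, synonyms: list[str]) -> dict:
    """Build a collection document with its synonyms stored in their own field."""
    return {"name": name, "synonyms": synonyms}


def synonym_aware_query(user_query: str) -> dict:
    """Search the collection name and its synonyms in a single query."""
    return {
        "query": {
            "multi_match": {
                "query": user_query,
                # Assumption: weight the real name above generated synonyms.
                "fields": ["name^2", "synonyms"],
                "operator": "and",
            }
        }
    }


# Hypothetical synonyms, invented for this example.
doc = collection_document("Cruises & Boat Tours", ["boat trips", "river cruise"])
body = synonym_aware_query("boat trips")
```

With a query like this, a user typing “boat trips” can reach the Cruises & Boat Tours collection without knowing its exact name.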

This approach proved to be remarkably successful - it was a low-effort approach that immediately expanded our users’ ability to search using their own words.

Solution 2: Typo tolerance

Typos are inevitable in user queries. Typo Tolerance is the practice of returning relevant search results even when the user query contains typos. There’s a powerful technique known as Fuzzy Matching that allows us to find similar, but not identical, strings to the user query. We can leverage this to match user queries with Typos to suggestions that look similar to the query.
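A minimal sketch of what a fuzzy query can look like in OpenSearch’s query DSL, built here as a Python dictionary. The field name `name` is an assumption; `fuzziness` is a standard option of the `match` query, and `"AUTO"` lets the engine choose the number of allowed edits based on the length of each term.

```python
def fuzzy_suggestion_query(user_query: str, field: str = "name") -> dict:
    """Build a match query that tolerates small typos in the user query."""
    return {
        "query": {
            "match": {
                field: {
                    "query": user_query,
                    # "AUTO" allows more edits for longer terms.
                    "fuzziness": "AUTO",
                    "operator": "and",
                }
            }
        }
    }


# A misspelt "Reykjavik" can still match the correctly spelt suggestion.
body = fuzzy_suggestion_query("Reykjavic")
```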

Codeblock 1 - Using Fuzzy Queries to match queries with Typos

It’s important to note that Fuzzy Matching does not imply the Search Engine has any real understanding of the user query; it simply compares strings by edit distance, the number of single-character changes needed to turn one string into another. OpenSearch (through Apache Lucene) provides first-class support for Fuzzy Matching out of the box. This meant we could quickly leverage Fuzzy Matching to support Typo Tolerance in our Search Suggestions. The combination of Synonyms and Typo Tolerance improved our recall, but it introduced a new challenge: precision.

Pillar 2: Precision - quality over quantity

Precision measures how many of our results are actually relevant. With only 7 slots available to Search Suggestions and millions of items to choose from, precision becomes critical. High recall without precision leads to information overload and an inferior user experience.
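To make the two measures concrete, here is a small sketch that computes precision over the top 7 suggestions and recall over all returned suggestions. The function names and the sample data are hypothetical, and in practice deciding which suggestions count as relevant is the hard part.

```python
def precision_at_k(suggestions: list[str], relevant: set[str], k: int = 7) -> float:
    """Fraction of the top-k suggestions that are relevant."""
    top_k = suggestions[:k]
    if not top_k:
        return 0.0
    return sum(1 for s in top_k if s in relevant) / len(top_k)


def recall(suggestions: list[str], relevant: set[str]) -> float:
    """Fraction of all relevant items that were returned."""
    if not relevant:
        return 0.0
    return len(set(suggestions) & relevant) / len(relevant)


# Hypothetical data: four suggestions shown, three items truly relevant.
shown = ["Paris", "Paris Walking Tours", "Parma", "Paros"]
relevant = {"Paris", "Paris Walking Tours", "Paris Catacombs"}

print(precision_at_k(shown, relevant))  # 0.5
```

Here half of the shown suggestions are relevant, and two of the three relevant items were found, so recall is two thirds.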

The precision challenge

When we increased recall by using synonyms and typo tolerance, we began returning too many results that were loosely related. Users searching for specific experiences were overwhelmed with marginally relevant options. We needed to iterate on our Typo Tolerance solution.

Introducing finessed Typo Tolerance™

We needed a way to balance improved recall through Synonyms & Typo Tolerance with precision, so we could be confident our users were seeing the most relevant suggestions for their queries. We phrased the problem as: “We only want to show our users similar matches through typo tolerance when exact matching fails”. We could have achieved this by performing two separate search queries: one with fuzzy enabled and one without. Instead, we opted to use a single query and leverage OpenSearch’s Disjunction Max Query with two query clauses, one exact and one fuzzy. A Disjunction Max Query scores each document by its best-scoring clause. We then used the boost parameter to heavily penalise the fuzzy matches. This ensured that fuzzy matches scored so poorly that we never showed them above more precise matches.
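A minimal sketch of this idea, built as a Python dictionary. The field name `name` and the boost value `0.01` are assumptions chosen for illustration; `dis_max`, `fuzziness`, and `boost` are standard parts of the OpenSearch query DSL.

```python
def typo_tolerant_query(
    user_query: str, field: str = "name", fuzzy_boost: float = 0.01
) -> dict:
    """Combine an exact clause and a heavily down-weighted fuzzy clause."""
    exact_clause = {
        "match": {field: {"query": user_query, "operator": "and"}}
    }
    fuzzy_clause = {
        "match": {
            field: {
                "query": user_query,
                "operator": "and",
                "fuzziness": "AUTO",
                # A boost below 1 shrinks the score of fuzzy-only matches.
                "boost": fuzzy_boost,
            }
        }
    }
    # dis_max keeps the best-scoring clause per document, so an exact
    # match keeps its full score and a fuzzy-only match scores far lower.
    return {"query": {"dis_max": {"queries": [exact_clause, fuzzy_clause]}}}


body = typo_tolerant_query("Reykjavic")
```

A document that matches exactly also matches the fuzzy clause, but its score comes from the stronger exact clause. A document that only matches fuzzily is still returned, just ranked below any exact match.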

Codeblock 2 - Using a DisMax Query to Enable Fuzzy Matching

This approach generally means we maintain high precision while ensuring users still get helpful results even when their queries don’t match exactly.

Pillar 3: Business value - strategic result ordering

Not all search results are equal from a business perspective. It’s not enough to just show the best matching suggestions from a lexical perspective. We need to balance our beautiful matching tech with the Search bar’s ability to drive conversion. As mentioned previously, we have millions of documents in our inventory, but we only have 7 slots for suggestions. So how do we decide what to show the user?

We found we needed to go beyond just text matching; we needed a way to represent user interest in each of our products. For example, given 10 results for Paris Walking Tours, how do we decide which one to show? From the activity content, they’ll all be reasonably similar. They’ll all involve walking in Paris, and probably view the same sights. You could sort by price, but does a lower price always mean better? Probably not.

Also, search suggestions are a great way to inspire searches! It’s helpful to show tangentially related results so our users know what else they can find in their destination of choice.

To improve the ordering of our suggestions, we associated popularity scores with each item in our inventory. These popularity scores serve as a simple rubric for choosing among the 10 hypothetical walking tours. Each popularity score was normalized between 0 and 1, and could be very handily used by OpenSearch’s rank_feature query. This query allowed the popularity score to influence the final result set.
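A rough sketch of how a popularity score can be combined with text matching. The field names `name` and `popularity` and the default boost are assumptions; the mapping uses the `rank_feature` field type, and the query places the `rank_feature` clause in `should` so that it only adjusts the score of documents that already match the text.

```python
# Assumed index mapping: popularity is stored as a rank_feature field.
POPULARITY_MAPPING = {
    "mappings": {
        "properties": {
            "name": {"type": "text"},
            "popularity": {"type": "rank_feature"},
        }
    }
}


def popularity_ranked_query(user_query: str, popularity_boost: float = 1.0) -> dict:
    """Require a text match, then let popularity influence the ordering."""
    return {
        "query": {
            "bool": {
                # Candidates must match the user's text.
                "must": [
                    {"match": {"name": {"query": user_query, "operator": "and"}}}
                ],
                # Popularity adds to the score of matching candidates.
                "should": [
                    {"rank_feature": {"field": "popularity", "boost": popularity_boost}}
                ],
            }
        }
    }


body = popularity_ranked_query("Paris Walking Tours")
```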

Codeblock 3 - Using Rank Feature to influence ordering of Suggestion results

While this approach worked well to ensure we were serving suggestions that were good for the business, we quickly discovered that the popularity score could also dominate the Search Results. The most egregious example is when you type Porto, but see results for Paris because Paris is way more popular than Porto. All of a sudden, we found our suggestions lacked intuition.

Pillar 4: Intuition - results must make sense

Business optimization can compromise user intuition. When business ranking dominated results, suggestions became unintuitive and confusing. Users couldn’t understand why certain results appeared, leading to a poor user experience. We needed to find a way to balance business objectives with user expectations. Through several experiments and internal testing, we found that:

- Results need to make intuitive sense to the user. Suggestions should clearly connect to a user’s Search Query; otherwise, the experience quickly becomes frustrating.

- Business considerations should be a secondary ranking factor, after we’ve identified suitable suggestion candidates for the user’s query.

There’s no silver bullet for building this pillar; it requires trial and error (or a well-trained Machine Learning Model) to define a formula that limits the effect of our popularity scores while still letting them influence the result set and drive growth. In our case, a simple first solution was to reduce the boost value for the rank feature.
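The effect of the boost value can be illustrated with a toy model. The sketch below assumes a simplified additive score (text score plus boost times popularity) and uses invented numbers; OpenSearch’s actual scoring is more involved, so treat this purely as an intuition aid.

```python
from dataclasses import dataclass


@dataclass
class Candidate:
    name: str
    text_score: float   # hypothetical lexical relevance for the query "Porto"
    popularity: float   # normalized popularity between 0 and 1


def rank(candidates: list[Candidate], popularity_boost: float) -> list[str]:
    """Order candidates by a simplified score: text + boost * popularity."""
    ordered = sorted(
        candidates,
        key=lambda c: c.text_score + popularity_boost * c.popularity,
        reverse=True,
    )
    return [c.name for c in ordered]


# Invented numbers: Porto matches the query better, Paris is more popular.
CANDIDATES = [
    Candidate("Porto", text_score=5.0, popularity=0.3),
    Candidate("Paris", text_score=2.0, popularity=0.9),
]

print(rank(CANDIDATES, popularity_boost=10.0))  # ['Paris', 'Porto']
print(rank(CANDIDATES, popularity_boost=1.0))   # ['Porto', 'Paris']
```

With a large boost, popularity overwhelms the text match and Paris outranks Porto. With a smaller boost, popularity only breaks ties between candidates that match about equally well.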

Codeblock 4 - Using Rank Feature to influence ordering of Suggestion results

The challenge of balance

These four pillars need constant attention and adjustment to maintain ‘good’ Search. Focusing too much on one can cause cracks in another. It’s a constant balancing act:

- Improve recall too much, and precision suffers

- Optimize for business value, and intuition breaks down

- Focus solely on precision, and you miss discovery opportunities

- Prioritize intuition over business needs, and you lose strategic value

Looking ahead

The work outlined in this blog has helped us build the foundations of ‘good’ Search suggestions here at GetYourGuide. As we look to the future, we intend to continually assess the performance of our suggestions and leverage the latest tools to improve them further.

In conclusion

Building ‘good’ Search is about more than just returning relevant results; it’s about understanding the complex interplay between user needs, business objectives, technical capabilities, and human psychology. Our work on Search is never ‘finished’ — we’re continually innovating to create a search experience that delights our users, drives business value, and delivers unforgettable experiences to millions of travelers worldwide.

