How We Find and Fix OOM and Memory Leaks in Java Services

For live resource-usage statistics, we rely on Datadog. For deeper analysis of heap allocations, we use the jemalloc library together with jeprof, its profile analysis tool.


Constantin Șerban-Rădoi, a senior backend engineer in the Fintech team, works on various projects including the checkout and payments systems. As part of the company’s efforts to move away from a monolith, his team is extracting the business logic into separate microservices piece by piece. He shares how his team solved a memory usage issue they faced while extracting an image processing microservice.

The most recently extracted microservice is an image processing service that resizes, crops, re-encodes, and performs other processing operations on images. This service is a Java application built with Spring Boot in a Docker container and deployed to our Kubernetes cluster hosted in AWS. While implementing this service, we stumbled upon an enormous problem: the service had issues with memory usage. This article will discuss our approach to identifying and fixing these problems. I'll begin with a brief introduction to general memory issues, and then dive deep into our process for solving this problem.


An overview of memory issues

There are many kinds of errors that directly or indirectly affect an application. This post focuses on two of these issues: the OOM (out of memory) errors and memory leaks. Investigating these kinds of errors can be a daunting task, and we will go through the steps we took to fix one such error in a service that we have developed.

Here’s an overview of the topics we’ll look at today:

- Understanding OOM errors and identifying their causes

- Understanding memory leaks

- Main causes of memory leaks

- Case Study

- Troubleshooting

- Tools for identifying memory issues

1. Understanding OOM errors and identifying their causes

OOM errors represent the first category of memory issues. They occur when an application tries to allocate memory on the heap but, for various reasons, the operating system or the virtual machine (for a JVM application) cannot fulfill that request. As a result, the application’s process stops immediately.

What makes these errors difficult to identify and fix is that they can happen at any time, from any place in the code. It is therefore often not enough to look at some logs to identify the exact line of code that triggers them. Some of the most frequent causes are:

- The application needs more memory than the OS can offer. Sometimes this can be fixed by simply adding more RAM. However, there’s a limit to how much RAM you can add on a machine, so one should try to avoid excessive memory usage whenever possible.

- The Java application uses the default JVM memory limits, which are typically rather conservative. As the application grows in size and more features are added, it eventually exceeds that limit, at which point the JVM throws an OutOfMemoryError and the application fails.

- There is a memory leak which, in a long-running process, eventually exhausts the available resources.
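To check which limits a running JVM actually resolved to, one option is to ask the runtime directly. The following is a minimal sketch, not part of the original investigation; the class name `HeapLimits` is hypothetical. The reported maximum corresponds to the effective value of flags such as `-Xmx` or `-XX:MaxRAMPercentage`, or to the JVM default when neither is set.

```java
// Minimal sketch: report the heap limits of the current JVM.
// HeapLimits is a hypothetical helper name, not taken from the article.
final class HeapLimits {
    static final long MIB = 1024L * 1024L;

    /** Maximum heap the JVM will attempt to use, in MiB (the effective -Xmx). */
    static long maxHeapMiB() {
        return Runtime.getRuntime().maxMemory() / MIB;
    }

    /** Heap currently reserved by the JVM, in MiB. */
    static long committedHeapMiB() {
        return Runtime.getRuntime().totalMemory() / MIB;
    }

    /** Heap currently in use (reserved minus free), in MiB. */
    static long usedHeapMiB() {
        Runtime rt = Runtime.getRuntime();
        return (rt.totalMemory() - rt.freeMemory()) / MIB;
    }

    public static void main(String[] args) {
        System.out.printf("max=%d MiB, committed=%d MiB, used=%d MiB%n",
                maxHeapMiB(), committedHeapMiB(), usedHeapMiB());
    }
}
```

Running this inside the container, rather than on a developer machine, shows the limit the deployed process really has.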

There are various open-source and closed-source tools for checking the memory usage of a process and how it evolves. We will discuss these tools in a later section.


2. Understanding memory leaks

Let’s first understand what a memory leak is. A memory leak is a type of resource leak that occurs when there is a failure in a program to release discarded memory, causing impaired performance or failure. It may also happen when an object is stored in memory but can’t be accessed by the running code.

This sounds rather abstract, but what does a memory leak actually look like in real life? Let’s look at a typical example of a memory leak in an application written in a language with garbage collection (GC).

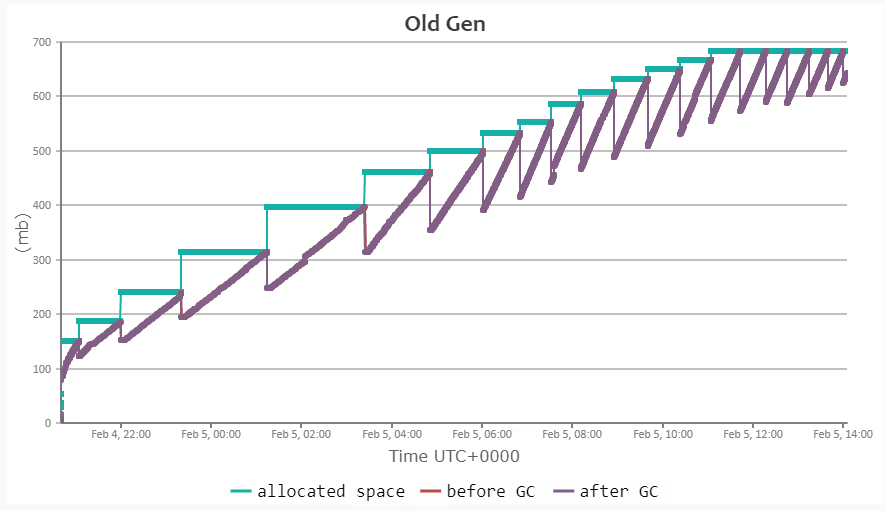

The graph shows the memory pattern of the Old Gen memory (long-lived objects). The green line shows the allocated memory, the purple line shows the actual memory usage after the GC has executed the sweeping phase for Old Gen memory, and the vertical red lines show the difference between the memory usage before and after the GC step.

As you can see in this example, each garbage collection step slightly reduces the memory usage, but overall the allocated space grows over time. This pattern indicates that not all the allocated memory could be freed.
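The "usage after GC" series in such a graph can also be read from inside the JVM through the standard `java.lang.management` API. The sketch below is an illustration under assumptions, not the way the graph above was produced; the class name `PostGcUsage` is hypothetical, and pool names depend on the garbage collector in use.

```java
import java.lang.management.ManagementFactory;
import java.lang.management.MemoryPoolMXBean;
import java.lang.management.MemoryType;
import java.lang.management.MemoryUsage;
import java.util.LinkedHashMap;
import java.util.Map;

// Minimal sketch: read per-pool heap usage as measured after the last GC.
// PostGcUsage is a hypothetical helper name.
final class PostGcUsage {
    /** Heap pool name -> bytes used after the most recent GC of that pool (-1 if unknown). */
    static Map<String, Long> usedAfterLastGc() {
        Map<String, Long> result = new LinkedHashMap<>();
        for (MemoryPoolMXBean pool : ManagementFactory.getMemoryPoolMXBeans()) {
            if (pool.getType() != MemoryType.HEAP) {
                continue; // skip non-heap pools such as Metaspace
            }
            // getCollectionUsage() returns null when the pool does not support it
            MemoryUsage afterGc = pool.getCollectionUsage();
            result.put(pool.getName(), afterGc == null ? -1L : afterGc.getUsed());
        }
        return result;
    }

    public static void main(String[] args) {
        usedAfterLastGc().forEach((name, bytes) ->
                System.out.println(name + ": " + bytes + " bytes after last GC"));
    }
}
```

Sampling these values periodically and watching whether the old generation's post-GC usage keeps rising gives the same signal as the purple line described above.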

3. Main causes of memory leaks

There can be several causes for memory leaks. We will discuss the most common ones here. The first and probably most easily overlooked cause is the misuse of static fields. In a Java application, static fields live in memory as long as the owner class is loaded in the Java Virtual Machine (JVM). A class is only unloaded when its class loader becomes unreachable, which for most application classes never happens during the program's execution, so neither the class nor its static fields will ever be garbage collected.
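A minimal sketch of this pattern follows. Everything in it (the class `StaticCacheLeak`, the `PROCESSED` list, the `handleRequest` method) is hypothetical and only illustrates how a static collection keeps its contents reachable.

```java
import java.util.ArrayList;
import java.util.List;

// Minimal sketch of a static-field leak. All names here are hypothetical.
final class StaticCacheLeak {
    // Reachable from the class itself, so its contents are never garbage collected
    // for as long as the class stays loaded.
    static final List<byte[]> PROCESSED = new ArrayList<>();

    /** Simulates handling a request and "remembering" its payload forever. */
    static void handleRequest(int payloadBytes) {
        byte[] payload = new byte[payloadBytes];
        PROCESSED.add(payload); // nothing ever removes this entry
    }

    /** Total bytes kept alive by the static list. */
    static long retainedBytes() {
        long total = 0;
        for (byte[] payload : PROCESSED) {
            total += payload.length;
        }
        return total;
    }

    public static void main(String[] args) {
        for (int i = 0; i < 1000; i++) {
            handleRequest(1024);
        }
        System.out.println("retained bytes: " + retainedBytes());
    }
}
```

The usual remedies are to bound such a collection, evict entries explicitly, or avoid holding request data in static state at all.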


Unclosed streams and connections represent another cause for memory leaks. In general, the operating system only allows a limited number of open file streams, so if an application forgets to close these file streams, it will eventually become impossible to open new files.

Similarly, the number of permitted open connections is also limited. If one connects to a database but does not close the connection, the application will reach the global limit once enough such connections have been opened. After this point, the application will not be able to talk to the database anymore because it cannot open new connections.
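In Java, the usual guard against this is try-with-resources, which closes the resource even when the body throws. The sketch below uses a hypothetical `TrackedResource` as a stand-in for a file stream or database connection so that the number of open resources can be counted; it is an illustration, not code from the service.

```java
import java.util.concurrent.atomic.AtomicInteger;

// Minimal sketch comparing manual close with try-with-resources.
// TrackedResource is a hypothetical stand-in for a stream or connection.
final class ResourceClosing {
    static final class TrackedResource implements AutoCloseable {
        static final AtomicInteger OPEN = new AtomicInteger();

        TrackedResource() {
            OPEN.incrementAndGet();
        }

        void use(boolean fail) {
            if (fail) {
                throw new IllegalStateException("simulated failure");
            }
        }

        @Override
        public void close() {
            OPEN.decrementAndGet();
        }
    }

    /** Leaks the resource whenever use() throws, because close() is skipped. */
    static void manualClose(boolean fail) {
        TrackedResource r = new TrackedResource();
        r.use(fail);
        r.close();
    }

    /** Closes the resource on both normal and exceptional exit. */
    static void tryWithResources(boolean fail) {
        try (TrackedResource r = new TrackedResource()) {
            r.use(fail);
        }
    }

    /** Runs the action, ignores the simulated failure, and returns how many resources stayed open. */
    static int leakedBy(Runnable action) {
        int before = TrackedResource.OPEN.get();
        try {
            action.run();
        } catch (IllegalStateException expected) {
            // the simulated failure is part of the demonstration
        }
        return TrackedResource.OPEN.get() - before;
    }

    public static void main(String[] args) {
        System.out.println("manual close leaked: " + leakedBy(() -> manualClose(true)));           // prints 1
        System.out.println("try-with-resources leaked: " + leakedBy(() -> tryWithResources(true))); // prints 0
    }
}
```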


Finally, the last major cause of memory leaks is native objects that are never freed. A Java application that uses a native library can pretty easily bump into memory leaks if the native library itself has leaks. These sorts of leaks are the most difficult to debug in general because most of the time, you don’t own the code of the native library and are using it as a black box.

Another aspect of native library memory leaks is that the JVM's garbage collector does not even know about the heap memory allocated by the native library. Thus, one is stuck with bare-bones tools to troubleshoot such leaks.

Alright, enough with the theory. Let’s look at a real-world scenario:

4. Case study - Fixing OOM issues in the Image Processing Service

Status quo

As described in the introduction section, we have been working on an image processing service. Here is what the memory usage pattern looked like during the initial phase of development:

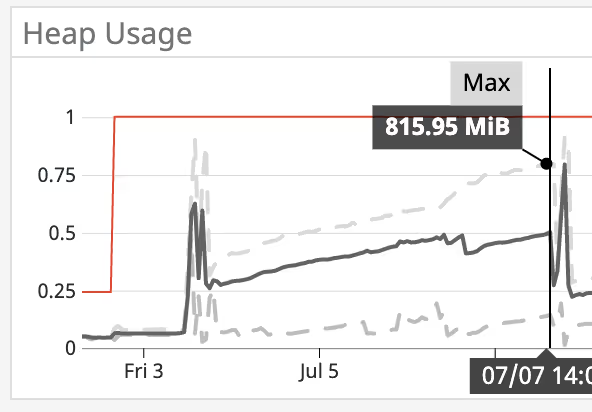

In this image, the numbers on the Y-axis represent GiB of RAM. The red line represents the absolute maximum that the JVM can use for heap memory (1GiB). The dark grey line represents the average actual heap usage over time, and the dashed grey lines represent the minimum and maximum actual heap usage over time. The spikes at the beginning and the end represent the times when the application was being re-deployed, so they can be ignored for the purpose of this example.

The graph shows a clear trend: heap usage starts at roughly 300MiB and grows to over 800MiB in just a couple of days, at which point the application, which runs within a Docker container, would be killed due to OOM.

To better illustrate the situation, let’s also look at other metrics of the application during that same period of time.

Looking at this graph, the only indication of a memory leak was that heap usage and GC old gen size grew over time. When the heap space usage reached 1GiB, the Kubernetes pod where our Docker container was running was killed. Every other metric looked stable: the thread count stayed constant at slightly under 40, the number of loaded classes was stable as well, and so was the non-heap usage.

The only variable piece that is missing from these charts is the garbage collection time. It increased proportionally to the allocated memory on the heap. This meant the response time was getting slower and slower the longer the application was running.
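For readers without an APM dashboard, accumulated GC time can be read from the JVM itself. This is a minimal sketch using the standard `GarbageCollectorMXBean` API; the class name `GcTimeReport` is hypothetical, and collector names vary with the GC algorithm in use.

```java
import java.lang.management.GarbageCollectorMXBean;
import java.lang.management.ManagementFactory;

// Minimal sketch: report how much time the JVM has spent in garbage collection.
// GcTimeReport is a hypothetical helper name.
final class GcTimeReport {
    /** Approximate total milliseconds spent in GC since JVM start, summed over all collectors. */
    static long totalGcMillis() {
        long total = 0;
        for (GarbageCollectorMXBean gc : ManagementFactory.getGarbageCollectorMXBeans()) {
            long millis = gc.getCollectionTime(); // -1 when the collector does not report it
            if (millis > 0) {
                total += millis;
            }
        }
        return total;
    }

    public static void main(String[] args) {
        for (GarbageCollectorMXBean gc : ManagementFactory.getGarbageCollectorMXBeans()) {
            System.out.printf("%s: %d collections, %d ms%n",
                    gc.getName(), gc.getCollectionCount(), gc.getCollectionTime());
        }
        System.out.println("total GC time: " + totalGcMillis() + " ms");
    }
}
```

Comparing the total between two points in time, relative to the elapsed wall-clock time, shows whether GC overhead is growing along with heap usage.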

5. Troubleshooting

The first step we took in trying to address the problem was to make sure that all streams and connections were getting closed. There were some corner cases we had not initially covered. However, nothing changed after fixing them. We were observing the exact same behavior as before. This meant we had to dig even deeper.

The next step was to look at the native memory usage and make sure everything that is allocated gets freed eventually. The OpenCV library that we use to do the heavy lifting for our service is not a Java library, but a native C++ library. It provides a Java native interface that we can use in our application.

Because we knew that OpenCV can leak native memory, we made sure all OpenCV Mat objects were released and called the GC explicitly before sending back a response. There was still no clear leak indicator and nothing changed in the memory usage pattern.

With no clear indication so far, it was time to further analyze the memory usage with dedicated tools. First, we looked at the memory dumps in a memory profiler tool.

The first dump was generated right after the application was started and had only a few requests. The second dump was generated right before the application reached 1GiB of heap usage. We analyzed what was allocated and what could pose an issue in both instances. There was nothing out of the ordinary at first sight.
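The article does not say how the dumps were produced. One common way on HotSpot-based JVMs, shown here only as a sketch under that assumption, is the `HotSpotDiagnosticMXBean`, which writes an `.hprof` file that MAT can open. The class name `HeapDumper` and the file locations are hypothetical.

```java
import com.sun.management.HotSpotDiagnosticMXBean;
import java.io.IOException;
import java.io.UncheckedIOException;
import java.lang.management.ManagementFactory;
import java.nio.file.Files;
import java.nio.file.Path;

// Minimal sketch: write a heap dump from inside a HotSpot-based JVM.
// HeapDumper and the chosen file names are hypothetical.
final class HeapDumper {
    /** Writes a heap dump to target; the file must not exist yet and should end in .hprof. */
    static Path dump(Path target, boolean liveObjectsOnly) {
        try {
            HotSpotDiagnosticMXBean bean =
                    ManagementFactory.getPlatformMXBean(HotSpotDiagnosticMXBean.class);
            bean.dumpHeap(target.toString(), liveObjectsOnly);
            return target;
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
    }

    /** Convenience helper: dumps into a fresh temporary directory. */
    static Path dumpToTempDir() {
        try {
            Path dir = Files.createTempDirectory("heapdump");
            return dump(dir.resolve("snapshot.hprof"), true);
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
    }

    public static void main(String[] args) {
        Path file = dumpToTempDir();
        System.out.println("wrote " + file + " (" + file.toFile().length() + " bytes)");
    }
}
```

Taking one dump shortly after startup and another close to the heap limit gives the two snapshots needed for the comparison described here.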

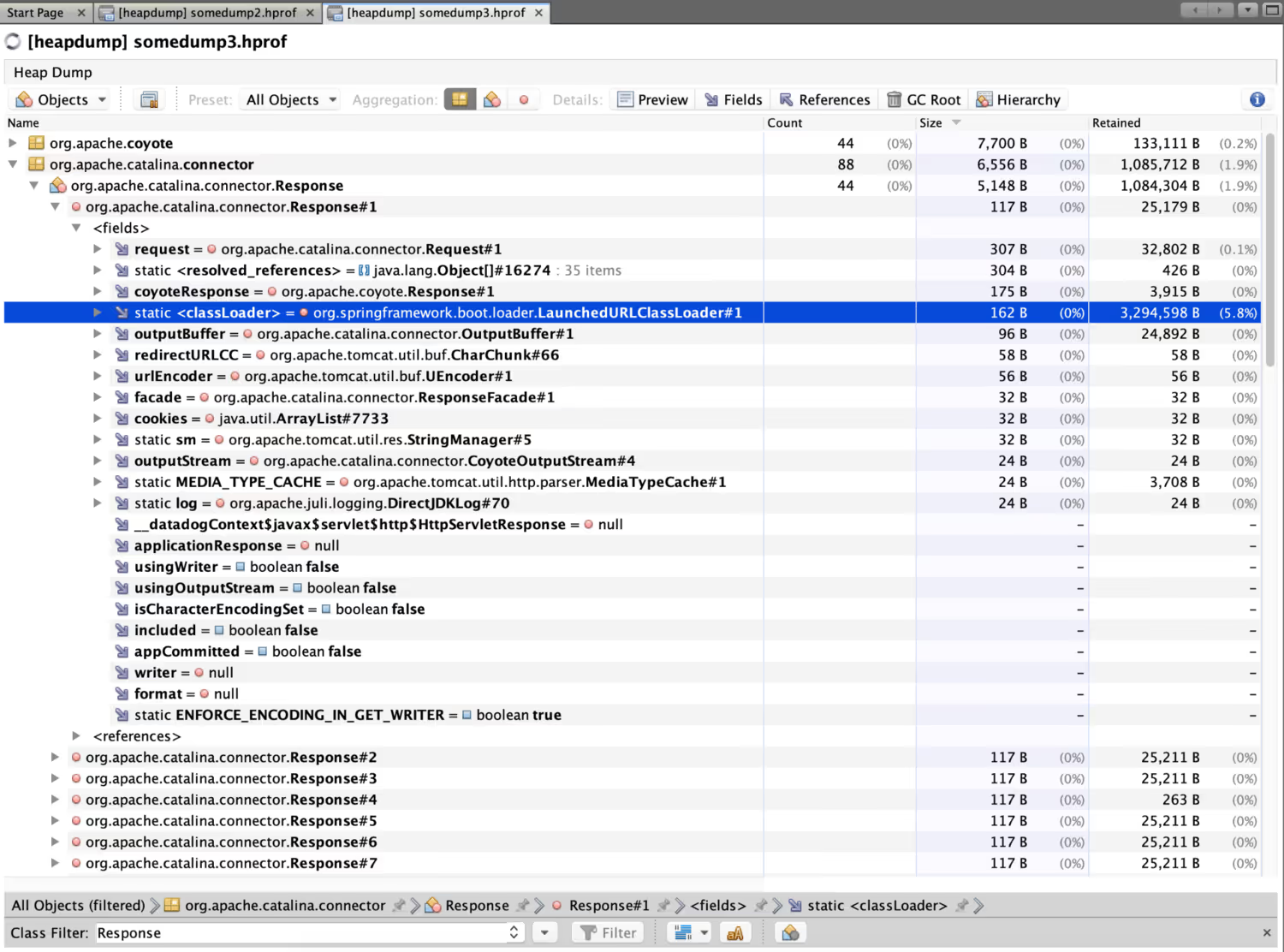

Then, we decided to compare the objects on the heap that required the most memory. To our surprise, we had quite a few request and response objects stored on the heap. This was the “bingo” moment.

Looking a bit deeper at this memory dump, we saw 44 response objects stored on the heap, far more than in the initial dump. Each of these 44 response objects held a reference to its own LaunchedURLClassLoader because each lived in a separate thread. Every single one of these objects had a retained memory size in excess of 3MiB.

Then it clicked. We allowed the application to use way too many threads for our use case. By default, a Spring Boot application uses a thread pool of size 200 to process web requests. This proved to be way too large for the purpose of our service since it needs several MB for each request/response to hold the original/resized images in memory. Because threads are only created on demand, the application begins with a small heap usage, but grows higher and higher with every new request.

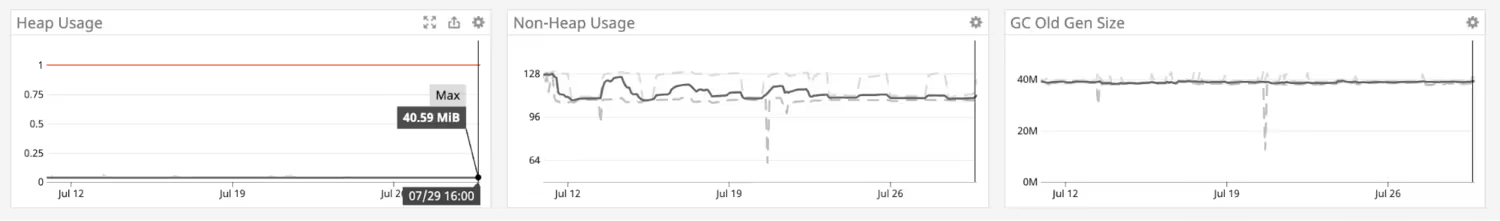

The actual fix for the problem was surprisingly simple. We chose to lower the default thread pool size from 200 to 16 threads. This solved our memory issues for good. Now the heap is finally stable, and as a consequence GC is also faster.
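A back-of-the-envelope estimate shows why the pool size matters. The sketch below assumes, as a simplification, that every worker thread retains one request/response pair and uses the figure of 3MiB from the dump as a lower bound per thread; the class name `ThreadPoolHeapEstimate` is hypothetical. The article does not state which setting was changed; for a Spring Boot application on embedded Tomcat, the usual knob is the `server.tomcat.threads.max` property (`server.tomcat.max-threads` in older versions).

```java
// Minimal sketch: lower-bound heap retained by per-thread request/response data.
// Assumes linear scaling with the number of threads, which is a simplification.
final class ThreadPoolHeapEstimate {
    /** Heap (MiB) retained if every worker thread holds perThreadMiB of request data. */
    static long retainedMiB(int threads, long perThreadMiB) {
        return (long) threads * perThreadMiB;
    }

    public static void main(String[] args) {
        long perThreadMiB = 3; // from the dump: each response object retained over 3 MiB
        for (int threads : new int[] {16, 44, 200}) {
            System.out.printf("%d threads -> at least %d MiB%n",
                    threads, retainedMiB(threads, perThreadMiB));
        }
        // prints:
        // 16 threads -> at least 48 MiB
        // 44 threads -> at least 132 MiB
        // 200 threads -> at least 600 MiB
    }
}
```

With a 1GiB heap limit, 200 threads at this lower bound would already claim more than half of the heap, while 16 threads stay under 5% of it.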

6. Tools for identifying memory issues

In the process of investigating and troubleshooting this issue, we used several tools that proved to be essential:

Datadog

The first tool, which we already had at hand, was the Datadog APM dashboard for JVM metrics. It was very easy to use and allowed us to obtain the above graphs and dashboards.

Jemalloc together with jeprof

Another tool we used to analyze heap usage and native memory usage was the jemalloc library, which can profile calls to malloc. To use jemalloc, install it with apt-get install libjemalloc-dev and then inject it at runtime into the Java application:

LD_PRELOAD=/usr/lib/x86_64-linux-gnu/libjemalloc.so MALLOC_CONF=prof:true,lg_prof_interval:30,lg_prof_sample:17 java [arguments omitted for simplicity ...] -jar imageprocessing.jar

To sum it up, I thought it was a memory leak. But, with a mixture of patience, intuition, and meticulously using the memory analyzer (MAT), my "aha" moment came when I compared the memory usage from one snapshot to another, and realized the problem was the number of threads spawned by Spring Boot.

If you are interested in joining our engineering team, check out our open positions.
