GetYourGuide’s Clickstream Data Collector - A Move From Akka To Pekko

Discover how GetYourGuide optimized its clickstream data collection by transitioning from the Akka framework to its public fork, Pekko. Dive into the challenges faced, solutions implemented, and the benefits of this migration for enhanced customer experiences.

Key takeaways:

Robert Bemmann, and Bora Kaplan, Senior Data Engineers at GetYourGuide in Berlin, share the migration process of the Analytics Pipeline Collector from Akka to its open-source fork, Apache Pekko. They shed light on the rationale behind the transition, the technical complexities encountered, and share valuable insights that can serve as a guide for similar migrations.

{{divider}}

At Getyourguide we collect clickstream data (events data) on a large scale to improve the customer journey and bring incredible experiences to everyone. We, as the data platform, ensure that each component of the whole events pipeline is working smoothly and reliably in a scalable and fault-tolerant manner. The first component of the pipeline, the Analytics Pipeline Collector, collects a variety of different analytical events from all kinds of producers throughout our website.

Written with the Akka framework - one of the most popular open-source frameworks in scala and java - we had to migrate the application just recently to its public fork Pekko due to a license change from Open Source to Business License Source. We also want to take this opportunity to thank all of the hardworking contributors that made this release of Pekko possible.

The analytics pipeline collector built with Akka

The Analytics Pipeline Collector is an HTTP API that validates and forwards analytical events to Kafka. This service is part of the Core Data Platform's Analytics Pipeline, representing the event collection part, and it's used by GetYourGuide's website and Native Apps. The collector receives between 200 to 300 million events per day. It was built in scala using Akka modules such as akka-slf4j, akka-stream, akka-http and akka-http-spray-json. The application is deployed on Kubernetes and configured to scale automatically whenever the influx increases.

Initially we chose an Akka HTTP server as the backbone for our Collector because of these 3 main advantages:

- Asynchronous and Non-blocking: Akka HTTP is built around asynchronous and non-blocking principles. This means it can handle a large number of concurrent connections and requests without blocking threads, leading to highly responsive and scalable servers. Asynchronous handling of requests is crucial for modern web applications where responsiveness and low latency are essential.

- Integration with Akka: The seamless integration with the Akka actor system is a major advantage. Akka actors provide a powerful concurrency model that simplifies the development of concurrent and distributed systems. With Akka HTTP, you can leverage actors for handling HTTP requests and responses, making it easier to build complex, stateful, and fault-tolerant applications.

- High-level Routing DSL: Akka HTTP offers a high-level Routing DSL that allows you to define your API routes in a concise and expressive manner. This DSL simplifies the process of defining RESTful endpoints and handling complex routing logic. It's particularly useful for building clean and maintainable APIs.

We did not consider any other framework, as there was no need for a rewrite, although we looked briefly into Tapir as an alternative. But just using Pekko as a replacement for Akka seemed to be the best option, as the pre-migration deployment was stable, scalable and resilient.

Why we think this is useful

We think a lot of applications that were built with Akka will consider migrating to the OS fork Apache Pekko before using the BSL version from Ligthbend. As we migrated the Collector just recently (built with multiple components from Akka such as HTTP, Stream, etc.), we thought it may be helpful to share our learnings and give an estimation of the actual complexity. Even though the migration guides are very detailed, we still ran into a few issues that we think are worth mentioning.

Following the migration guides

Initially, we were following the official Pekko migration guide.

- At the time of the migration (August 2023) we were using scala 2.13.5. It is recommended to migrate from Akka 2.6 to Pekko 1.0.0 as Apache Pekko is based on the latest version of Akka in the v2.6.x series.

- In general, the change for the groupId of the libraries is “org.apache.pekko” instead of “com.typesafe.akka”.

- Changing the imports was straightforward: Pekko packages start with “org.apache.pekko” instead of “akka” (e.g. import org.apache.pekko.actor.ActorSystem instead of import akka.actor.ActorSystem)

- Some ports have changed, such as the default port for Akka management from 8558 to Pekko’s 7626 (docs) as well as ports for Pekko Classic Remoting and Pekko Artery Remoting

- Config names use “pekko” prefix instead of “akka”, e.g. pekko.actor.provider instead of akka.actor.provider

We went pretty far with these code changes already, but still had some small, yet painful to debug problems.

Problems we've faced during the migration

Mapping the libraries to update the build.sbt file

Usually all pekko libraries should use the same version (i.e. 1.0.0 at the time of the migration).

We recommend keeping a pekkoVersion variable in your build file, and re-use it for all included modules, so when you upgrade you can simply change it in this one place.

When we followed this advice we ended up with an exception stating “Mixed versioning is not allowed”:

See also: https://pekko.apache.org/docs/pekko/current/common/binary-compatibility-rules.html#mixed-versioning-is-not-allowed

In our case we had to use version 1.0.1 for pekko-slf4j library, that fixed the issue.

Another approach would be using a sbt plugin and printing out a graph of dependencies that helps identifying the problematic dependencies. Add this to your project/plugins.sbt file

addSbtPlugin("net.virtual-void" % "sbt-dependency-graph" % "0.10.0-RC1")

Then run

sbt dependencyBrowseGraph

Which shows something like this:

Based on this information you could use the excludeAll method in your build.sbt. excludeAll is useful when you want fine-grained control over your project's dependencies and need to exclude specific transitive dependencies that may cause conflicts or compatibility issues.

Almost all modules that we used had been moved to the new pekko codebase, even custom ones such as akka-http-circe and akka-http-cors. In our case the previous-to-current dependency mapping looks like this:

Pekko Configs

Take some special attention to the configs. We spend quite some time figuring out why our code was compiling, but units tests and local endpoints were not working properly. Compare all Akka configs in detail to the respective Pekko counterpart. In our case it turned out that two configs were not named correctly, so it was not recognized.

Example 1 - small differences with characters

Pekko-http-cors.conf file

Character naming convention in config for Akka HTTP CORS was not streamlined to the convention used in Akka, so we when moved to Pekko, the config was not recognized: “pekko.http.cors” instead of “akka-http-cors” (pekko vs. akka)

Example 2 - naming deviations

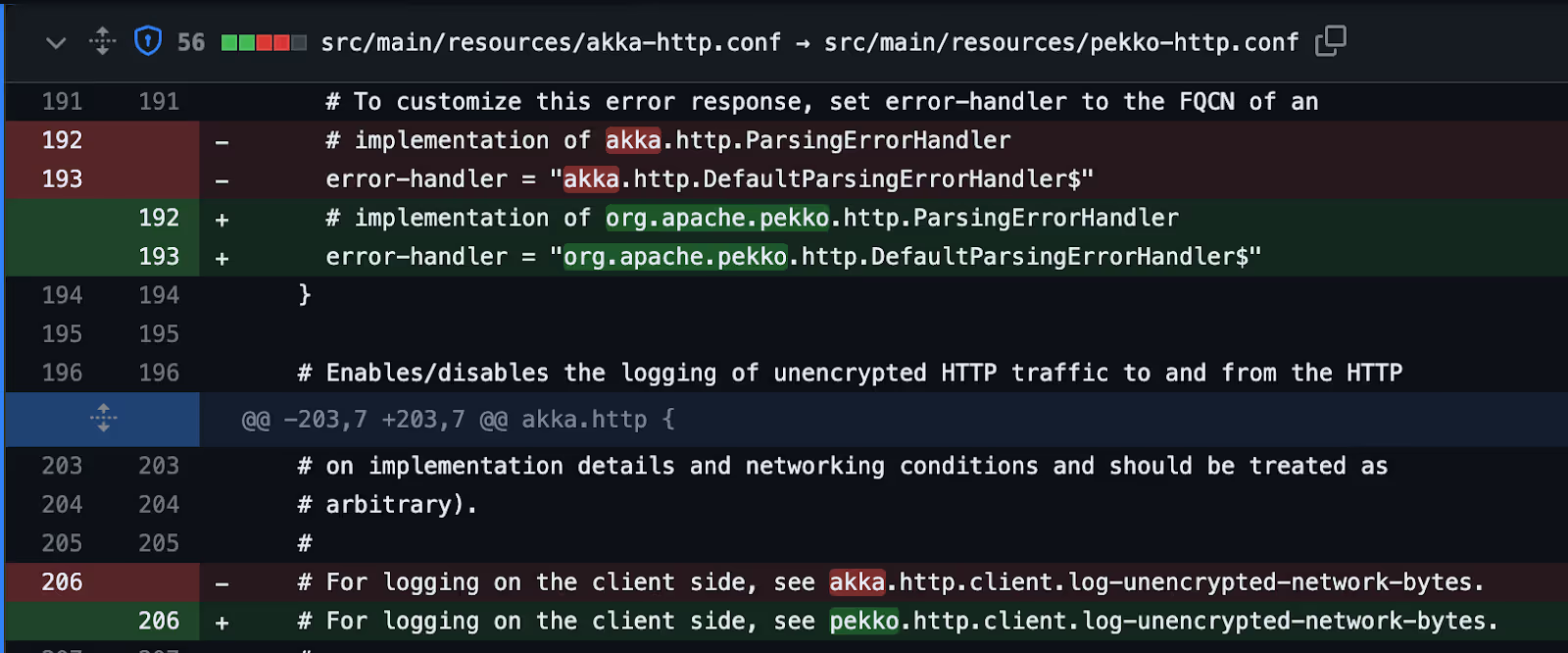

Pekko-http.conf file

Make sure to spot the classes in the config and not simply replace the “akka” with “pekko”

Telemetry with Kamon

We used Kamon Telemetry for tracing and measuring latency of the collector. By utilizing Kamon Telemetry for JVM, you gain the ability to gather metrics, seamlessly transmit context across threads and services, and effortlessly obtain distributed traces. At the time of writing Kamon did not release version 2.7.0 which contains support for Pekko. As the Kamon implementation is lean, we don’t expect big issues for these changes.

Helpful resources

Pekko migration guides, General Pekko docs, Pekko Management, this Zalando nakadi PR was helpful for me as an initial reference, and source code of the Pekko project on GitHub (for other modules, see the table above).

Conclusion

I’m citing one of the Pekko contributors from a Reddit thread here: “Generally speaking if you are using Akka on the latest version before the license change there shouldn't be any issues in migrating aside from the expected class/package/import/config name changes from akka to pekko.”

This was true also for our migration - except for the few edge cases mentioned above. After we were able to resolve them, we were unblocked and our application worked as expected and as before. In total, we roughly spent 2 days (1 FTE) for investigation and code changes and 2 days for testing (support for different Content-Types and all endpoints) and the actual rollout.

As a wrap-up, our learnings, in short, were:

- Start with Pekko migration guides, main changes are the groupId of the libraries, the import prefix (“org.apache.pekko” instead of “akka”) as well as some default ports

- Some libraries that were previously external, were now integrated into the Pekko project (e.g. pekko-http-cors, pekko-http-circe)

- Unit tests should uncover version incompatibilities between the pekko modules (in our case sl4j and testkit)

- Check your existing configs carefully and whether some naming conventions have changed, some custom akka libraries were integrated into the Pekko project and the character naming conventions have been streamlined. Also simple string replacement “akka” to “pekko” may not catch classes in the config.

.JPG)