Migrating the Pipeline: Tracking Clickstream Events

How a year-long migration to improve the pipeline that tracks our event data called for cross-team collaboration, clear communication, and continued stakeholder support.

Key takeaways:

Berlin-based Data Engineer, Bora Kaplan, shares how a year-long migration to improve the pipeline that tracks our event data called for cross-team collaboration, clear communication, and continued stakeholder support.

{{divider}}

Tracking user actions is critical for any business to understand how users behave on the application. It helps us to improve the user experience and make data-driven decisions.

It’s our mission at GetYourGuide to bring incredible experiences to everyone. To be able to deliver just that, we have to learn what makes it incredible for our users. We need a dedicated pipeline and services built around it for this purpose specifically.

As data platform engineers, we are responsible for providing a framework to anyone who wants to work with data. That includes clickstream data or as we refer to it, events data. As our users go through our websites or apps, we track their actions and capture events. Just to illustrate what kind of data we’re talking about in this post, here’s a simplified example for CurrencyChangeAction event:

{

"currency": "GBP",

"old_currency": "GBP",

"new_currency": "EUR",

"producer_properties": ,

"enriched_properties":

There are five different types of events:

- PageRequest/View Events: These are page view events which are fired whenever a user lands on a website page or navigates to a view on the native apps. For example, HomePageRequest, DiscoveryView, etc.

- Action Events: These are events fired by user-initiated actions and usually describe either a business process, or a meaningful interaction. For example, BookAction, CurrencyChangeAction, etc.

- UI Events: Generic user actions generated by operating on the UI. For example, UIClick, UISlide, etc.

- Error Events: These are tracked when the user encounters an error. For example, AvailabilityError, PaymentError.

- Other Types of Events: These are events that don't fit into the first four categories. For example, ExperimentImpression, AttributionTracking.

At the time of writing, we have 267 unique events flowing through our pipelines. On average, we receive about 2,500 events per second, or 216 million events per day through our ingestion service.

Events data is very valuable for the application writers to ensure the health of their services. Our data analysts, business intelligence engineers, and even product managers use this data to run analyses to build dashboards and generate reports. Data scientists use this data to build machine learning models for our recommendation systems to serve people with better search results and related activities. It also powers our AB experimentation platform and our marketing attribution model.

Last year we completed our migration to the new platform. It was a major project, requiring close collaboration with every one of GetYourGuide’s engineering teams. In this post we will go through how we improved our pipeline and data quality, and also look at the challenges that we faced while running a year-long migration.

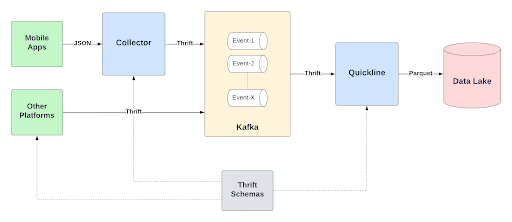

Old Architecture

The old architecture was based on Thrift. Schemas were created as Thrift definitions and the incoming JSON payload would be converted to Thrift and then sent to Kafka. From there, it would be consumed and converted into Parquet to end up in our data lake.

Components

- Collector - The ingestion service, written in Scala with Akka Http. It receives event payloads in JSON, converts them to Thrift and validates the schema. It then does basic enrichment (e.g., geolocation data) and publishes the event to Kafka.

- Kafka - Communication layer between our applications. Events are mostly published by Collector while some producers bypass it and publish to Kafka directly. Each event gets its own topic.

- Quickline - A simple Kafka consumer written in Java. It consumes all the event topics, converts them to parquet using Thrift schemas, then dumps them to S3.

- Thrift Schemas - Our monorepo containing all Thrift definitions. When there is an update, our CI/CD pipeline will trigger a new build for Collector and Quickline to consume the updated schema definitions.

Strengths

- Simpler architecture, easier to implement and maintain. Applications would send mostly complete payloads to Collector or Kafka directly. This means that data flow is very simple.

- Thrift enables us to have some schema control (but not perfect) on the data.

- Basic data enrichment directly done in Collector. There is no downstream pipeline to enrich data further. Payloads contain almost all the data required.

- Some applications can bypass Collector and send to Kafka directly. This leads to better results for producer applications with heavy loads.

Weaknesses

- Our team became a bottleneck controlling the schemas. Thrift schemas were centralized in a single repository. This meant that every change would have to go through us. So not great ownership across producer teams.

- Very relaxed schema definitions. Multiple applications were using the same definitions and sending the same event with different properties. We were not able to enable strictness on the data. Data quality suffers when everything is essentially nullable.

- Lower data quality because of lack of ownership. This also meant monitoring the overall pipeline was not easy. There was no defined process for producers to follow through with their events after creation.

- Everyone being able to bypass Collector meant that the only thing we could enforce was the Thrift schema on the events payload on Kafka messages. Not so great for enriching the data or monitoring the flow.

- Thrift being a non-human readable text, makes it hard for developers to debug.

- Collector doing all the work means long response times, which is not great for producer applications.

Given the weaknesses, it was obvious that we needed an improved system. But there was also another reason that triggered the migration: the switch to micro-service architecture within all engineering teams.

{{quote}}

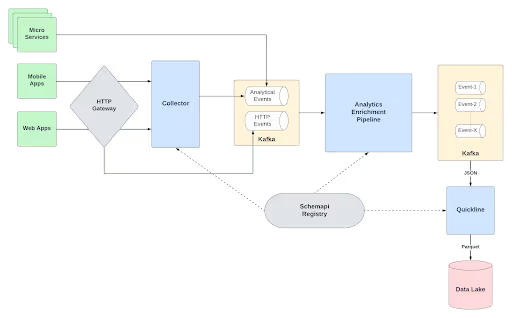

New Architecture

The new architecture is more complex with an additional new component and another layer of topics on Kafka. Ingestion is mostly owned by Collector but we still enable some services to bypass it and publish to Kafka directly – but this time with an SDK that we designed.

Thrift is replaced with JSON everywhere and we are using OpenAPI and AsyncAPI to define our schemas. We now have another layer for enrichment which brings much richer events with an additional source of data (HTTP events) coming directly from our Gateway.

Updated Components

- Collector - It’s still our ingestion service. But in this iteration it’s much more lightweight. It just receives events to validate their schemas and publish to an intermediate topic for analytical events.

- Analytics Enrichment Pipeline - Is the main part of this new architecture. It’s composed of multiple streaming applications written in Scala using Kafka Streams. It is responsible for many things but mostly enriching the data in real-time, by joining analytical events with HTTP events coming directly from the ingestion layer.

- Schemapi Registry - The new component to publish and consume schemas. Schemas are created-validated-published via plugins on our CI pipeline. They are served by an HTTP service or from a Kafka topic to get updates in real-time.

- HTTP Gateway - Another source of data to enrich our events. For every HTTP request we get on our services, we publish an event to our HTTP Events topic. The data is HTTP headers which contain platform/device information and other bunch of important data.

Strengths

- Richer events with better data quality. Having an additional layer for enrichment and a new source greatly improves our data quality.

- Analytics Enrichment Pipeline takes the burden of enrichment from the producers and Collector, and does it in real-time.

- Human readable JSON payloads between the components. It makes it much easier to reason with the pipeline and improve developer efficiency.

- Independent producer teams and better ownership. We bring producer teams tooling to create and monitor events and they own the events they create. They are responsible for making sure they are producing good quality data.

- Better monitoring because of ownership. Now we can produce metrics regarding the events data, and teams are responsible for creating alarms on top of these metrics.

- Strict schema enforcement. Using OpenAPI for schemas gives us much better enforcement on the data, both syntactically and semantically.

Weaknesses

- Higher complexity and more components to maintain. While this architecture is technically superior to its predecessor, it brings additional complexity. There are more surface areas for errors and chances for something to break.

- More tooling is required to enable people. While giving producers ownership and efficiency is great, it comes with a cost of creating and maintaining additional tools for them.

- Only 99% guarantee on the enrichment ratio. Because of doing the enrichment in real-time, we have to have a window (ten seconds) to do the enrichment. If the corresponding HTTP event doesn’t show up during that window, the event has to go through without being enriched.

Migration Challenges

Although this undertaking has been technically very ambitious, I personally have been most challenged by the non-technical side of the project. As discussed, the migration was a year-long effort requiring close cross-team collaboration. There were a lot of people involved and it was challenging to make sure everyone was kept happy.

Having stakeholders at either end of the pipeline brought great challenges for us. On one side we have producers who own all the services we have on various different teams. They are the ones who are defining their events, creating the schemas, and publishing the events to our pipeline. On the other side, we have consumers who use the events data to build data products, dashboards, reports, and so on. Being just one team with limited resources made it hard to provide balanced care for both sides.

We spent the majority of our time working with producers during the migration. The project was designed in a way to require little to no change on the final data, so the consumers wouldn’t have to do a huge migration. That meant the workload had to shift to our side and also the producers. Because of the size of the pipeline and the distributed nature of the migration, we couldn’t just switch from v1 to v2 on a single day and call it done. This was probably the biggest challenge of this endeavor: imagine a year-long migration with periodic updates from the old pipeline to the new one, in which people are supposed to follow the updates and keep up with the migration.

While we aimed for consumers to have no changes, they were ultimately inevitable. During the migration we kept the v1 version fully functional, while switching events to the new pipeline one-by-one over the year. We had quality checks and data validations to make sure the consumers would not be hit, but sometimes changes were required. Although these changes were very simple, consumers would still have to do investigations on their side to figure out which parts are affected, identify all the projects, and track the migration. The challenging part was to provide them a way to do this investigation just once and be done with it.

Some key learnings from these challenges:

- Communication is a must. Whenever you reach out to people, make sure your message went across. Sometimes over-communicating could be a better choice. Just sending an email is not enough for the majority of cases. Do follow-up meetings or have key people on each team that you reach out to.

- Bring clear guidelines and documentation specific to your stakeholders. Make sure it’s up to date and it covers all the details people should know about. Have a few simple guides with links to give more detail on the more intricate parts.

- Automate warts of the pipeline and bring tooling instead of more documentation. You cannot rely on people following the docs with no errors, making attaching these tools to their CI/CD pipelines a great choice.

- Be flexible when it comes to adapting change. Your plans will usually not align with other teams’ plans. Make sure there is always a buffer on your plans: give people more time than you think they will need.

- Find a good balance between being strict with your new pipeline rules and allowing people to adapt. Sometimes you might have to compromise on your rules for people to complete their migration without hassle. Once you move everyone to the new system, then you can slowly work on closing the gap on your ideal situation.

- Expect to give a lot more dedicated support for your stakeholders. You cannot just rely on giving people guides and expect them to complete their migration. Rather, you will have to have meetings with them to explain the process and show how it should be done. In some cases, you might have to even do it for them.

- Prioritizing between project/stakeholder management and technical implementation is very hard. Balance your own time by dedicating time slots for both parts, either on your daily schedule or on your sprint for the next week. Another tip is make use of additional tools like notes or calendars.

The Next Steps

No software project is ever completely finished, and it’s the same for our pipeline. Now we have the base of the platform down, we can build additional niceties on top. These could include:

- Improve toolings we provide to work with events data. Mostly on the producers’ side, our OpenAPI toolings could use some better user experience. We need stricter validations on publishing schemas to reduce problems caused by user error.

- Provide clear documentation and guidelines for both sides of the pipeline. Producers should have an easy-to-follow guide to learn how to create events. And consumers should know the journey of events and how to work with them.

- Provide data catalog and improve data discoverability. This is a missing link for us at the moment which I will personally be tackling in the very close future. (So watch this space for another blog post!)

- Provide better monitoring and visibility to keep the data quality at best. From time to time, things might go wrong and need investigation which is time consuming. We should have automated processes that do quality checks for us and have better visibility on the pipeline to follow the data journey.

Today we have a much better system that is stable, has low latency, and with much better data quality. While it was very challenging to run a long migration with so many people involved, it was very rewarding. We are ready for hundreds more events and thousands more users going through our platforms.

The switch meant there would be purpose-specific applications trying to produce events only with the data available in their context. As those applications cannot have the whole context of an event that we need, there needed to be an additional component dedicated to enrich the data. For this reason, we started architecting the new system.