How Sequential Testing Accelerates Our Experimentation Velocity

Discover how GetYourGuide's Senior Product Analyst, Konrad Richter, revolutionizes A/B testing with sequential testing—a smarter, faster approach that cuts down on experiment runtimes and boosts product development. Dive into this game-changing strategy and learn four key insights from their journey to more efficient experimentation.

Key takeaways:

Konrad Richter, Senior Product Analyst at GetYourGuide in Berlin, shares how a new approach to A/B testing reduces the average runtime of experiments, accelerating product improvements at GetYourGuide.

{{divider}}

Ever felt like your product experiments are taking forever to yield actionable insights? You're not alone. Traditional A/B testing methods require a fixed sample size and runtime, making them rigid and difficult to adapt to real-time data. But what if there's a smarter, faster way to experiment and iterate? That's why we switched to sequential testing at GetYourGuide, and the results have been transformative. In this post, I’ll share my four most important learnings from this journey. But first, a bit about sequential testing.

Sequential testing, an approach developed by Abraham Wald during World War II, addresses a critical issue in randomized controlled trials (RCTs) known as the ‘peeking problem.’

This problem arises when experimenters frequently check intermediate results with the intent of prematurely stopping the test, a practice that inadvertently inflates the false-positive error rate due to repeated significance checks.

While effective, traditional fixed-sample size A/B tests (FSTs) necessitate a pre-determined sample size calculated by pre-experiment power analysis, making it challenging to adapt to real-time insights or unforeseen circumstances.

Sequential testing offers a solution by allowing repeated significance checks throughout the experiment. This methodology not only aims to reduce the average runtime of experiments by permitting an early stop if results are decisive but also prevents error inflation.

Finding The Right Approach

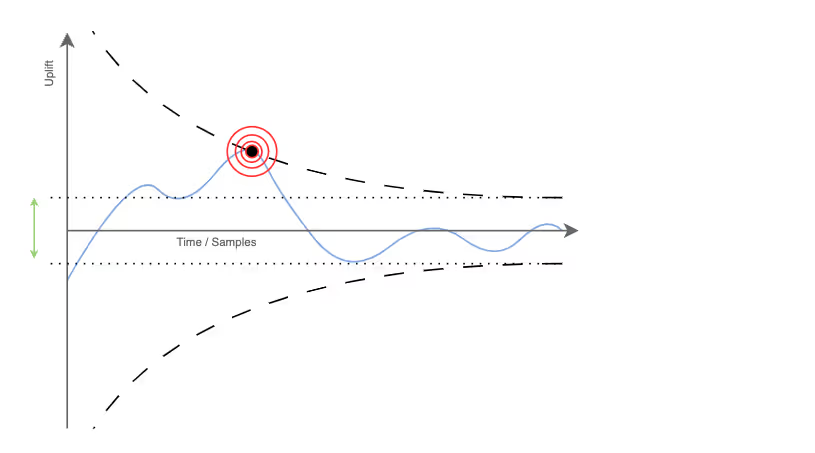

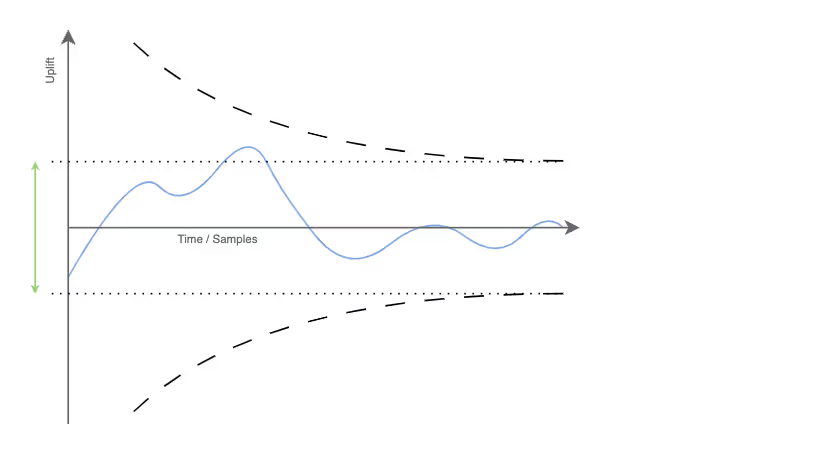

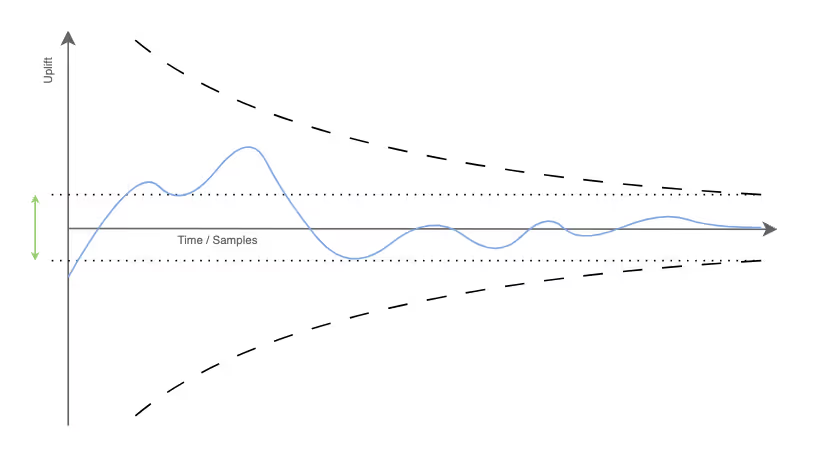

Sequential tests have lower statistical power (the likelihood that an experiment detects an actual effect when it exists) than FSTs because they require wider confidence intervals (decision boundaries) to control the overall type I error probability during continuous significance checks.

Therefore, sequential tests need a higher maximum sample size to maintain the same type I error rate and power as an FST. The following three graphs provide a graphical intuition for the relationship between type I error rate, power, and sample size:

Various approaches have emerged to run sequential analyses under error control, providing different solutions to balance the trade-offs between type I error probability, power, and maximum sample size.

The most prominently used ones are group sequential tests (GST) and always valid inference (e.g., mixture sequential probability ratio test and generalization of always valid inference). The Spotify blog post “Choosing a Sequential Testing Framework — Comparisons and Discussions” by Mårten Schultzberg and Sebastian Ankargren provides an excellent overview.

Our simulations validated this post's findings, highlighting GST as the most promising approach for us because our platform processes new experiment data in batches (daily). Always valid inference methods are better suited when analyzing each new observation (streaming); otherwise, their power is comparably low.

Break-Event Success Rate

With the findings of our simulations, we retrospectively applied GST to our historical experiments. We found that we could have achieved an average runtime reduction of ~23% (vs. FSTs) on experiments that eventually showed significant results. Still, to achieve the same statistical power, we had to increase the maximum sample size on average by ~6% (i.e., flat experiments ran ~6% longer).

Under these conditions, we would only have a net positive runtime reduction if more than 21% of experiments are successful. This high “break-even success rate” motivated us to take a different approach to sequential testing.

Early Termination of Unpromising Tests

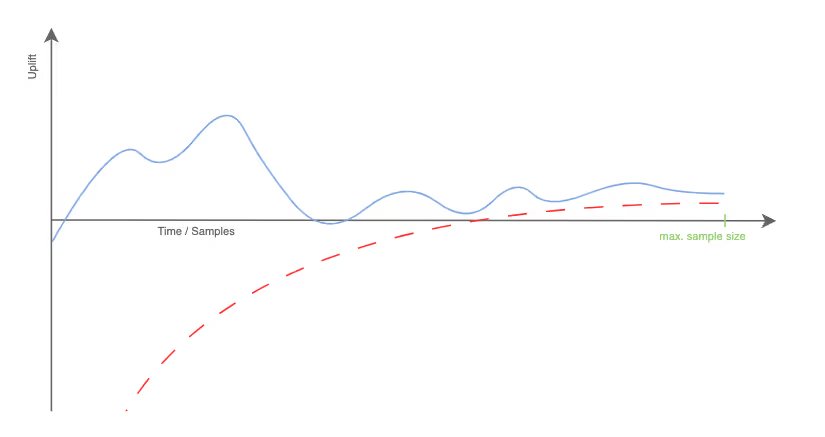

Many sequential testing implementations focus on concluding successful experiments early. Considering the rarity of successful experiments, we emphasize early termination of those that aren't promising. Unpromising experiments are those unlikely to reach the pre-defined minimum detectable effect (MDE) by the end of a pre-defined runtime based on intermediate results. Instead of using two (efficacy & futility) boundaries, we employ just a futility boundary. Thus, success is determined if an experiment concludes without crossing this boundary.

An experiment where the uplift crosses the futility boundary before reaching the maximum sample size can be stopped early and concluded to be not significantly positive with regard to the pre-defined MDE.

Simulations confirmed that our approach guarantees the defined type I and II error rates. Furthermore, we see an average runtime reduction across all our experiments of 36% compared to a two-sided FST setup.

Implementation

Calculations of the maximum sample size and the futility boundary are motivated by interpreting experiment results as a random walk and based on known Brownian motion characteristics. We call this innovative approach BaseMATH [preprint].

Stay tuned to our InsideGetYourGuide blog for the mathematical details and how BaseMATH overcomes the major challenge of repeated numerical integration in GSTs.

Although the BaseMATH approach simplifies the implementation of Sequential Testing compared to GST, we had to tackle a major reconstruction of our experimentation analysis platform first.

The problem we faced was that all statistical implementations, like significance tests or sample ratio mismatch tests, had been implemented in Looker. Implementing BaseMATH in Looker was impossible. Therefore, we decided to create a Python library containing all statistical implementations and a daily batch process to handle all data aggregations and calculations.

This reconstruction of our experiment analysis platform allowed us to integrate BaseMATH and to use Looker only as a visual interface for the pre-calculated data.

Roll-Out Process

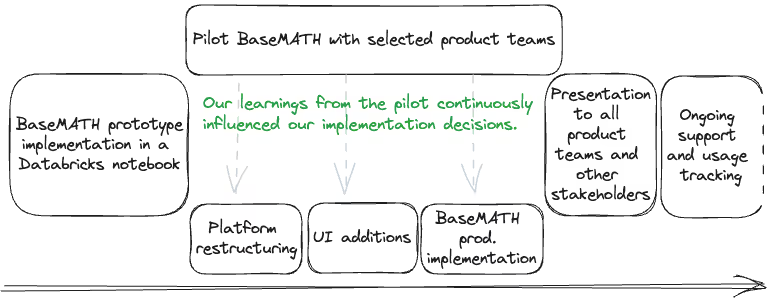

Our roll-out of the new sequential testing approach was methodically phased to ensure a seamless adoption process within the company. The journey began with phase 1, a pilot and validation stage, where we engaged selected product teams as early adopters of the BaseMATH methodology.

We provided these teams with a dashboard within Databricks, creating a practical environment for them to trial and interact with the new method. This phase was crucial for confirming our simulations and backtesting outcomes, understanding business stakeholders' specific needs and potential obstacles, and fostering a company-wide atmosphere of curiosity and acceptance for the new approach.

In phase 2, even as the pilot was underway, we initiated a comprehensive restructuring of our experimentation analysis platform to accommodate BaseMATH. This step included refining the user interface to facilitate a more straightforward experiment setup and more insightful post-analysis, setting the stage for broader adoption in the next phase.

With the groundwork laid, phase 3 centered on formally presenting the BaseMATH methodology to all stakeholders. We moved beyond the conventional Databricks notebook, positioning BaseMATH as a new standard within our organizational practices.

During this briefing, we highlighted the advantages of BaseMATH over traditional Fixed Sample Testing (FST) and discussed this innovative approach's operational nuances and limitations. To ensure the methodology's integration was as smooth as possible, our Analytics team established support channels, monitored the usage and effectiveness of BaseMATH, and committed to regular evaluations of stakeholder satisfaction, fostering continuous improvement and responsiveness.

Phase 4 underscores the importance of maintaining a collaborative approach post-rollout. We learned that continuous engagement with experimenters across the company was vital. It's not just about guiding teams through the initial rollout but actively engaging with them to optimize the methodologies' use. This strategy accelerates our experimentation velocity, reinforcing our dedication to innovation and efficiency.

Conclusions

As we wrap up our exploration of sequential testing and its implications for experimentation at GetYourGuide, we've taken a moment to reflect on what we've learned and where we go from here. In this section, you'll find a snapshot of our journey, the invaluable lessons we've gathered along the way, and some practical steps for those keen to enhance their experimentation strategies.

Summary

In this post, we've explored the potential of sequential testing in accelerating our experimentation processes at GetYourGuide:

- Sequential Testing vs. Fixed Sample Testing: We delved into the advantages of sequential testing, such as reducing the average runtime of experiments and tackling the 'peeking problem' in traditional A/B testing.

- Finding the Right Approach: Our simulations identified Group Sequential Tests (GST) as the most promising methodology for our specific needs. Focusing on the early stopping of unpromising experiments is most impactful.

- Implementation and Rollout: We shared our journey from reconstructing our analytics platform to the phased rollout of our BaseMATH methodology.

Learnings

- A minority of experiments yield positive results. Consequently, stopping unpromising experiments as early as possible is ideal for average runtime improvements.

- New methods are only impactful if experimenters adopt them.

- Piloting a new methodology with a small group of stakeholders is an excellent approach to understanding user needs.

- Experimentation is not intuitive; therefore, it requires extensive support.

Actionable Steps

- Evaluate Your Current A/B Testing: If you're using traditional Fixed Sample Testing, consider the limitations and whether sequential testing could offer benefits.

- Understand Your Data Flow: Sequential testing methods vary based on how your platform processes data, so choose accordingly.

- Start Small: Pilot the methodology with a small group of stakeholders before a full-scale rollout.

- Monitor and Iterate: Once implemented, keep tabs on performance metrics and be prepared to adapt and improve.

Special thanks to Alexander Weiss, Prateek Keshari, and Valentine Mucke for reviewing the article.

.JPG)