How to Empower Engineers with Infrastructure as Code

Dang Nguyen is an Engineering Manager on the Data Infrastructure team. He explains how a combination of Infrastructure as Code (IaC) and self-service capabilities can go a long way to make it easy, efficient, and safe for engineers to own their data systems.

Key takeaways:

Dang Nguyen is an Engineering Manager on the Data Infrastructure team. He explains how a combination of Infrastructure as Code (IaC) and self-service capabilities can go a long way to make it easy, efficient, and safe for engineers to own their data systems.

{{divider}}

One of the Data Infrastructure team's missions is to act as 'engineers for the engineers,' to help others understand and leverage the architecture and platform underlying their features. We support teams with their various use-cases which involve data systems. One of the most common support requests we used to receive was to set up a new database.

All Roads Start from Terraform

When a microservice is spun up, a new database is often required as well. Teams would create a JIRA ticket for us. We would in turn place a copy of our well-crafted Terraform module into the microservice’s repo, modify the relevant Terraform variables (e.g., number of instances, instance type, engine), terraform plan, terraform apply, then voilà:, the database would be ready and our engineers finally unblocked after only… one week. Let’s call this vicious cycle 'Terraforming in one week' – we will revisit it later.

We used the same approach for all of our databases, meaning that for 50 databases we had in production, there were 50 copies of the Terraform module laying in 50 different repos. One day, we decided to enable binlog replication for our databases, which would require changing the Terraform module. The novice approach would be to copy the same change to 50 Terraform modules laying around in 50 different repos, terraform plan 50 times, terraform apply 50 times.

Luckily we did not actually have to do this manually, thanks to the awesome tool auto-pr created by GetYourGuide’s Developer Enablement team. Nevertheless, you get the idea: it is very cumbersome to roll-out infrastructure changes across multiple Terraform stacks of the same resource. If only there were a way to centralize all of the Terraform stacks…

Atlantis to the Rescue?

Atlantis is not a replacement for Terraform, but rather an add-on. Atlantis features automation, standardization, and collaboration for Terraform. With automation and standardization, our aforementioned issue with manually updating 50 different Terraform stacks was resolved. On top of that, the collaboration feature meant that we would be able to enable our developers to independently make changes to their own databases or even set up new ones without having to wait for a week.

Unfortunately in our case, getting collaboration to work in a secured and user-friendly way was easier said than done. In order to provide some sort of role-based access control (RBAC) to make sure one does not interfere with others’ resources, we would need to tag our resources properly. If that was not challenging enough, Terraform syntax was not the most straightforward language from the developer’s point of view.

Although Atlantis did not work out for us, the idea of a centralized Infrastructure as Code (IaC) service featuring automation, standardization, and collaboration was here to stay, and would be embraced fully in our next solution.

Datastores Centralized Service (DCS)

Once we realized that there was no single existing solution that could help us check off all the boxes, we explored the path of developing our own in-house solution which would firstly bring together different existing components (so as to not reinvent the wheel); and second, bridge the business gap between these components.

In term of architecture, we take a MVC-like design where the three pillars are:

- UI (as V)

- REST API (as C)

- Pulumi (as M)

UI

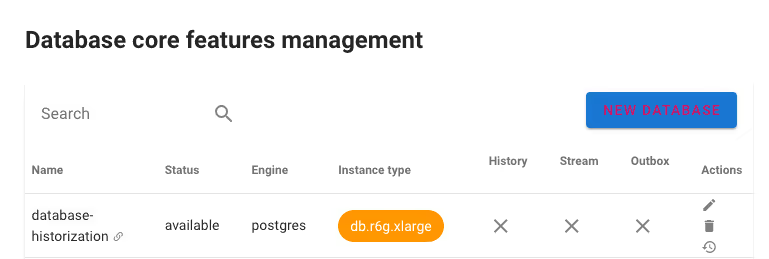

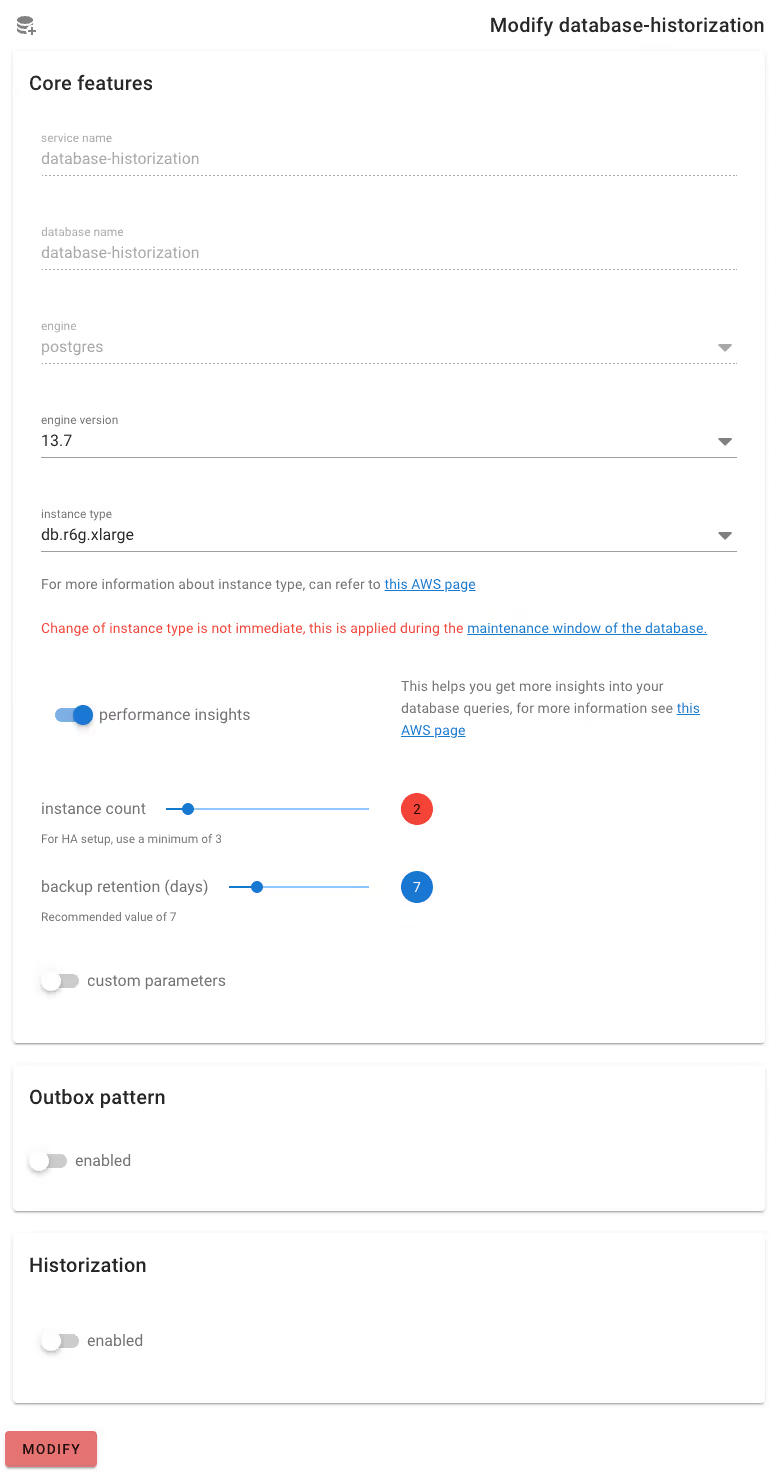

Serving as the one entry point for our users, the UI component is developed in Vue.js. It provides users with an overview of their datastores and the ability to modify them with just a few clicks. Below are examples of a database management view:

Besides being intuitive, one of the advantages of the single centralized UI is that it allows us to provide some best-practice configuration and guardrail against potentially dangerous changes. For example, to avoid accidental deletion of a database, the deletion request requires explicit confirmation from the admins before it can proceed.

As with the standard MVC design, requests made by users through the UI are dispatched to the Controller - our REST API component.

REST API

As the name suggests, our REST API component provides the REST endpoints for the UI component. However, it does even more than that: it also handles the business gap and helps glue all the components together. It achieves this by acting as a finite automata.

Continuing our previous example, once a request to create a new database reaches the REST API component, a series of asynchronous idempotent tasks are performed, such as:

- Pre-condition validation

- Superuser and admin credential creation in AWS Secret Manager

- AWS Aurora database cluster creation

- Administrative operations on newly created database

- Other tasks to enable special features, such as historization and outbox

The nature of these tasks vary. Tasks one and two may interact with external AWS API. Task three does the actual database provisioning via the Pulumi component (see below). Task four executes SQL queries against the database. Task five may interact with internal API. The task’s flexibility allows us to extend our capabilities to as many systems as necessary in order to fulfill a user’s request.

Pulumi

Finally, the component to do the job of Terraform is Pulumi. Essentially, with Pulumi you can write IaC in modern programming languages (e.g., Python, Typescript) instead of Terraform’s HCL. We are especially interested in their Automation API feature which enables us to get rid of the whole 'Terraforming in one week' cycle.

Another reason why we prefer Pulumi over other IaC solutions (such as CloudFormation) is its similarity to Terraform. In fact, it is so similar that one can do an almost perfect 1-to-1 translation from Terraform to Pulumi out of the box. That helps speed up the migration effort as we already have 50 Terraform stacks for our 50 databases.

Pulumi also makes standardization easier. Changes are made to the one centralized module, which then propagates to all the stacks of that module. We no longer have to worry about some forgotten stacks with inconsistent module templates.

Final Thoughts

There you have the three pillars of Datastores Centralized Service (DCS), our in-house self-service solution for empowering our engineers with IaC. Although we have only mentioned databases (which include both MySQL and PostgreSQL) in this article, we are currently also supporting Redis cache, Kafka, and Credential as well.

Needless to say, since the rollout of DCS, the number of support tickets to provision resources has gone down significantly (and have been replaced by feature requests instead). Teams are much happier when they can roll out a new database – and with that, a new service – at a moment's notice instead of being blocked for a week.

.JPG)