Running and Scaling a Synthetic AB Testing Platform for Marketing

To test the impact of marketing actions, Valentin created a synthetic A/B testing methodology without using visitors. He explains the methods he used to create and run them in a controlled environment. True to his team’s mission, the senior data analyst also shares how he created a self-service testing product that enabled the Marketing team to run these tests independently.

Key takeaways:

Valentin Mucke is a senior data analyst for the Marketing Analytics team. His team’s mission is to use analytics to improve prioritization and efficiency in company-wide marketing efforts. The team’s responsibilities include creating marketing dashboards, helping marketing teams with analytics needs, and creating forecasts.

{{divider}}

To test the impact of marketing actions, Valentin created a synthetic A/B testing methodology without using visitors. He explains the methods he used to create and run them in a controlled environment. True to his team’s mission, the senior data analyst also shares how he created a self-service testing product that enabled the Marketing team to run these tests independently.

Consider the following situation: You’re an analyst and someone in the Marketing team comes to see you with a problem. They want to understand if the display ads they’ve been running on specific websites are cannibalizing traffic they could have gotten for free.

If you work at a company that measures their marketing actions, you’ll hear this type of question nearly every day.

Building a piece of code to measure this use case is not always straightforward and then building it again for a similar use case a few weeks later can be frustrating. This also creates a bottleneck for the company as it limits how much measurement can be run to assess the impact of marketing actions. We faced this challenge and built a solution: a product that automates these marketing use cases — a self-service platform for running marketing measurements.

I’ll share how the Marketing Analytics team built this product for the Marketing team, as well as how to set up a controlled experiment in marketing. Here’s an overview of the topic areas we’ll cover:

- Challenges of A/B testing in marketing

- Running a non-visitor based measurement

- Sampling: creating multiple potential suitors

- Assessing similarity with correlation, distance or deviation

- Giving confidence by estimating the measurement power

- Bundling and building the test into a product for self-service

Challenges of A/B testing in marketing

It’s natural for many tech companies to measure all changes that are made in their product or platform. The same practice should be applied to marketing. To measure the change, you might have to answer questions like:

- Which page template is the best one to rank in Google search?

- Did running this ad increase my overall revenue?

- Do images in search ads increase my CTR?

But, while measuring the impact of a product change can be straight forward, it can be quite a challenge in marketing. Most product testing uses visitor A/B testing. The change is implemented only for a segment of the population (A) and not for the other one (B). This is done randomly. The performance of the two groups is then compared to understand the impact. Unfortunately, when it comes to ads, we don’t necessarily have control over which user will see or not see our ad.

When running an ad in paid search, Google decides whether a person will or will not see the ad. We do not have any control over that, nor do we have any information on who the non-targeted users were. The same concept applies to display banners.

If we want to test two different templates of a page in SEO, we cannot simultaneously run a visitor A/B test. The ambiguity is due to how Google indexes pages. The Google bot crawls and indexes pages, so you can’t give two different versions of one page.

You may also be interested: What is a good F1 score?

Running a non-visitor based measurement

Introduction to market level measurement

To measure the impact of our marketing actions, we chose a technique known as controlled before and after testing.

Let’s take a step back. Imagine you could duplicate our universe. In one universe you run an action, in the other you do nothing — then compare both worlds. That would be the best way to understand and measure the impact of your marketing. We can agree that it’s not realistic, but we can try to estimate it. It’s possible to find units, areas, and accounts that when looked at together, have the same behavior, same pattern, and same profile.

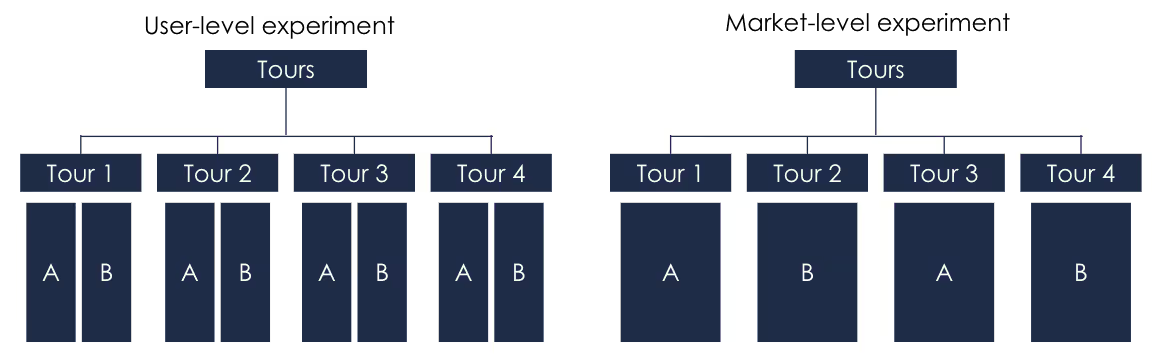

In a visitor based A/B test (user-level experiment), we would show one version to visitors A and another to visitors B.

In our case (market-level experiment) we will make changes in some units that will be group A and not in some other for group B. We will then compare the performance of the two groups.

A toy example:

In mathematics, a toy model is a simple case of a difficult problem. We'll use the following one to explain market-level experiment:

Let's say we’re running marketing campaigns in France, UK, Germany, and Poland. We will then create:

- A control group (A) is composed of countries where no marketing actions will be performed, we are expecting no change in the performance of these countries. Let's say France and the UK.

- A test group (B) is composed of countries where we will have a marketing event and expect to see an increase in revenue. In our case, Poland and Germany.

We will then run our marketing in Poland and Germany (test group). To measure the results of the experiment we will do the following :

- The first test of the measurement is to predict the performance typically expected for this group. The performance that we would expect without making any changes, we will call it the baseline. To predict the baseline we use a prediction algorithm using:

- Past data from the test group

- The control group data as a predictor.

- The prediction model is trained using the two features above and will predict the performance of the test group.

- The second step is then to compare the predicted baseline to the actual performance of the test group during the experiment. This is the difference that is going to be the effect of the marketing action.

What is key in this methodology is the concept of test and control group. The most important part is to find a control group that is similar to the test group so the model can accurately predict the baseline performance to compare against.

Without two groups behaving the same way, we will not be able to measure accurately. The next part will detail how we did that.

Sampling: creating multiple potential suitors

In the previous example, the choice of Poland and Germany as a control group was arbitrary, choosing a good control group in real life might be a bit more tricky. It is very unlikely that we would get a good match on the first try and we might be better off if we tried a couple of different groupings.

The first step is then to create several random aggregations of units.

Assessing similarity with correlation, distance, and deviation

Great, we now have over a hundred different aggregations but we just need one! The key in this part is now to be able to determine which groups are the most similar.

We have been asking ourselves the following question for quite some time: What does it mean technically to have two similar time series?

There is no magical answer, algorithm, or function that will say: “These two series are similar at 98.5%.”

There is a lot of research on this subject and multiple different methods can be applied. We decided to come up with a score created from the following calculations:

- Correlation — are the trends similar?

- Distance — are the times series close?

- Deviation — are the time series moving with the same magnitude?

Let’s take a closer look.

Similar trends with correlation

The first way to assess if two time-series follow the same pattern is to assess the similarity of their trends. When one time series is increasing, is the other one increasing with the same magnitude?

The approach used here, among one of the most common, is the Pearson correlation coefficient. This will assess the degree to which the two time-series move in relation together.

Distance:

Having two times series move together is one thing, but having them close is also a requirement. The closer the two times series are the more they should stay similar in the future. Having too much distance between two times series means that they may not react with the same magnitude to external effects.

To measure distance two main methods can be used:

Euclidean distance

Most common one but often also the most efficient. Compute the distance between two points, for every point of each time series. It will compute the distance between 1st of January 2021 observations for both test and control time series, then add the difference of 2nd of January and so on.

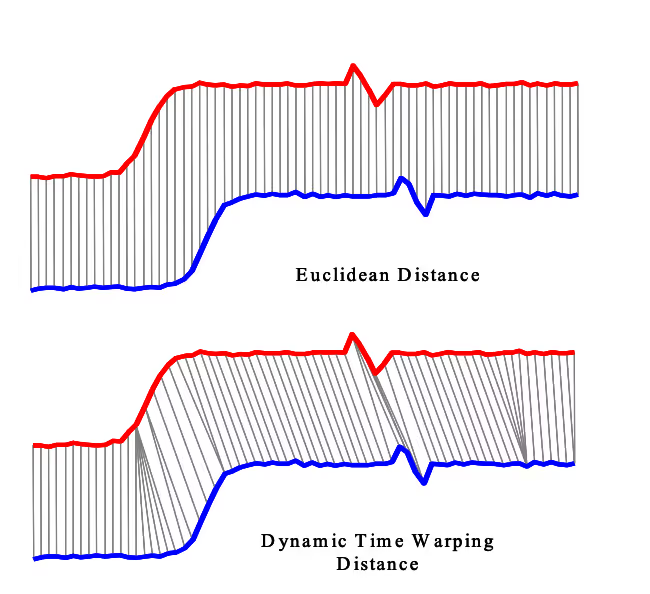

Dynamic time warping (DTW)

Compared to the Euclidean distance, in DTW the distance is computed against many points, this is called one-to-many matching. DTW will compute the distance between points 1 to 1, 1 to 2, 1 to 3…. This method can be useful when the two time-series have a similar trend but one is shifted in one direction. They should have the same prediction power but the euclidean distance could disregard them.

This is the case in the example below. These two graphs from research at the University of California shows two time-series with a similar trend but not aligned. In this case, Euclidean distance would compute a pessimistic dissimilarity measure.

Many other metrics can be used, like following the deviation of the time series against each other, assessing if they come from the same distribution with the Kolmogorov-Smirnov test, or several other methods.

Even with the metrics above, one question is still pending, which metric should I trust to make a decision?

Unfortunately, there is no easy answer. Highly correlated time series can still be slowly shifting away from each other, very close ones can be entangled.

Currently, we are using an empirical score that looks at the rank of each of the metrics (normalized) and recommends the best-positioned group.

A visual inspection of the proposed group is often recommended:

One direction of improvement could be to manually assess several groups to define if they should be selected or not. Then use an algorithm to learn the relationship between the metric above and the decision. This could give us a more precise indication of their weight.

Give confidence by estimating the measurement power

Now that we have found the best group among all those we had created, you could still ask the following question: “An effect of what magnitude will we be able to detect with confidence?”

In any test that you’re running, the confidence aspect is always important. How can you give confidence in the results you are going to provide?

Unlike an A/B test, there’s no simple calculation. But there’s still at least one technique that can help you gain more visibility.

The solution we have developed to answer this concern is simulation-based. The idea behind it is, like in a Monte Carlo simulation, applying various effects to several different test/control time series and measuring if we can detect them.

This will give us confidence in which level of uplift we should be able to detect in our experiment. Depending on the result we will assess if the scope is relevant for the outcome we are expecting.

You may also be interested in: Selecting and designing a fractional attribution model

Bundling it for self-service

In the previous sections, we reviewed all methods that are needed to set-up a control experiment.

As mentioned earlier, building all the necessary steps can take time and may require an analyst to do the job. We believe that this is a limiting factor, creating a bottleneck in the number of experiments that can be run and measured.

We built a product that would allow a marketing person to perform all the actions on itself and launch an experiment without difficulty in no time. The product is built with a combination of notebooks inside Databricks (a Data and Analytics platform) and automated visualization in Looker (Data visualization platform).

To do so we have created two main components:

- An analytics core, which creates the randomized groups, compute similarity and give a recommendation

- A product UI where the user can

- define the scope of its experiment

- get a group proposal

- lock the experiment

- automatically get the measured impact after the test run.

The analytics core

The analytics core is a bundle of the steps we detailed earlier. It will run in succession:

- Create the database within the scope of the experiment

- Randomly create several test/control potential groups

- Assess the similarity of each group

- Give a recommendation

- Run the measurement of the experiment at the end of the test period

The product

We wanted to create a self-service product for our internal team, therefore, it was key to develop a user-friendly interface. The product around the analytics core allows marketers to:

- Use the interface to select the scope in which they want to run their experiment. We are using a broad range of filters including:

- data source

- time period

- characteristics of the entities

- geographics restrictions

- Visually inspects the recommended groups for the experiment. This part is important to add confidence in the measurement and avoid a black-box effect.

- Track the performance during the experiment and access measurement results in the end.

Conclusion

In a data-driven company, this type of project is key to be able to make most of our decisions based on data and measurement. Having the will and resources to measure our actions is important, but having the capabilities to do it at scale and automated is even better.

If you are interested in joining our Marketing Analytics team, check out our open positions.