Using Deequ to Validate the Content of our Dataframes

The Marketing Tech Platform (MTP) team is responsible for managing the flow of data between GetYourGuide and our marketing partners.

Key takeaways:

Giorgos Ketsopoulos is a backend engineer at GetYourGuide. He explains how the Marketing Tech Platform team provides complete and accurate information to customers and internal data consumers using Deequ.

The Marketing Tech Platform (MTP) team is responsible for managing the flow of data between GetYourGuide and our marketing partners.

Data can flow from our marketing partners to GetYourGuide, such as our advertising campaign performance reports, or in the other direction. Marketing feeds, for example, are exported to our marketing partners who use their own algorithms to advertise GetYourGuide experiences across the web.

{{divider}}

Why do we validate our data?

One of the MTP team’s biggest responsibilities is to ensure the terabytes of data flowing through our pipelines remain correct and consistent. We use Apache Spark, Databricks, and Kafka (among other tools) to enrich our data with meaningful information before serving it to its final consumer(s).

Examples include joining performance metrics to specific advertising keywords to see how they perform, and retrieving additional information about our GetYourGuide activities (e.g. if they’re wheelchair accessible) before exporting them to our marketing partners.

Thanks to our data validation process, we’re certain our customers are seeing complete and accurate information, while our internal data consumers have all the information they need to drive our marketing and brand campaigns.

Testing data before implementing Deequ

We currently have multiple approaches to ensuring our data is in the correct form:

- We define Spark data set schemas

- We implement shape tests to ensure our data changes the way we think it will through our data pipelines

- We unit test all our data transformation functions

However, these approaches are mostly concerned with the form of the data rather than the data itself.

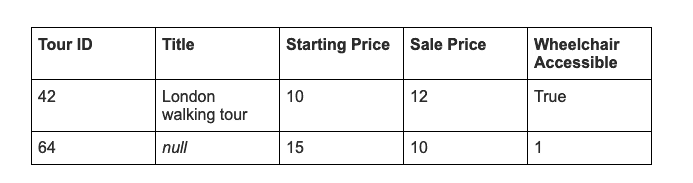

Consider the following fictitious data set as a simplified illustration of the data we provide to our marketing partners:

The following issues pop out:

- The sale price is higher than the starting price for tour 42

- Tour 64 has no title

- The wheelchair accessibility field for tour 64 is 1 instead of True

The data checks mentioned above would definitely miss issue one, and can potentially miss the other two. The end result would be incorrect data for our marketing partners, hurting our marketing growth, and our customers.

This is just one example of logical data constraints we would like to implement. But reality can be significantly more complex:

- What if we want to allow a very small number of our tours to not have a city (they are on a small island, for example)?

- The URL for the tour should follow a specific string pattern

- Ratings should be between 1 and 5

All these constraints require us to test the values of our data. Our original approach was to implement our own verification suite, but adding new types of constraints and optimising Spark UDFs for implementing checks can be tricky.

At one point, it started to feel like we were developing a whole library from scratch. We decided to check if someone else had already done this and found there are a few viable alternatives, the best-suited to our needs being Deequ.

{{divider}}

Adopting Deequ

Deequ is an open source library developed and used by Amazon to test data quality at scale. The library provides a range of features, including metric generation and anomaly detection, but the one we use at GetYourGuide is creating and validating data constraints.

As is often the case with the tools we use, our adoption of Deequ has been gradual. It piqued the interest of our engineers and we decided to test it during our regular Hack Days. We then used it on our marketing feeds, and it’s already in production for one of them.

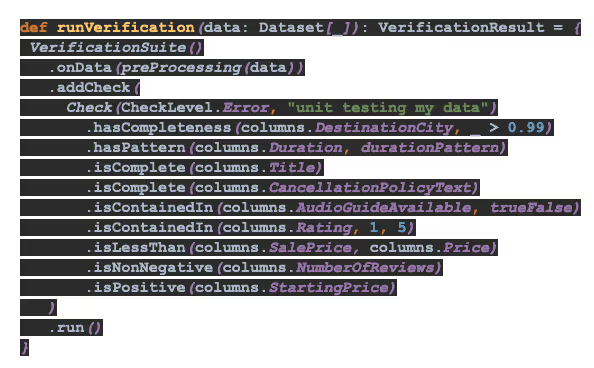

We create a set of constraints and compose them onto a VerificationSuite. Running the suite returns a VerificationResult, the status showing us which constraints passed. As the Deequ team puts it, “Instead of implementing checks and verification algorithms of your own, you can focus on describing how your data should look.”

If a constraint is violated, we throw a custom exception in the running job. No data is better than wrong data. The exception will create a monitoring alert, and a member of the team can fix the source of the inconsistency and restart the pipeline.

Challenges we faced using Deequ

No tool is perfect. Or at least the perfect tool is yet to be invented, which is good because I like my job. Our main issue with Deequ involves composing more complicated constraints.

Our tour duration column should follow a specific pattern, but can also be null. One of Deequ’s constraints cannot check this. In this specific example, we decided to add a pre-processing step to the validation, replacing null with 00:00:00 for the validation step.

Other composite constraints are very difficult to check. What if 80 percent of a column should adhere to one of our many conditions, while the remainder can be null? A constraint will have to be broken down to its composites in some form, which can be complicated, or in some cases impossible.

We could try extending Deequ ourselves, but that could be significant work. A lot of the classes can’t be easily extended and would require creative and complex workarounds such as implicit conversions.

Next steps

Despite the occasional issues with Deequ, it works very well for the overwhelming majority of our use cases, and we’re looking to expand its use in our codebase.

Our first goal is to extend the use of Deequ throughout our marketing feeds, leveraging its automatic constraint suggestion to make developers’ lives easier.

We also want to improve the metrics and monitoring around Deequ. Observability and accessibility are at the heart of our engineering principles, and our use of external tools is no exception. Deequ can provide a lot of metrics around the health of our data set, and we’re exploring ways to make the most of it during our regular Hack Days.

Finally, Deequ works well with another upcoming project: data lineage. When Deequ tells us some of our data looks wrong, an engineer digs into it and finds out where things went awry. Data lineage keeps track of the flow of data, making this process faster and better documented.

Conclusion

There are many approaches to validating data. Deequ has made it very easy to validate the content of our data sets here at GetYourGuide. We can sleep at night knowing our advertising campaigns are on point, and our colleagues see the latest, correct data.

But what about the rare occasion that one of our data constraints is violated? Our alerting system will kick in, we’ll grab a coffee, and implement a fix. All thanks to our engineers, our tools, and the wonderful open source ecosystem that Deequ is a part of.

If you’re interested in joining our engineering team, check out our open positions.