What’s a Good F1 Score?

Last year, I worked on a machine learning model that suggests whether our activities belong to a category like “family friendly” or “not family friendly”. Following our Data Science principles, I came up with a simple first version optimizing for its F1 score , the most-recommended quality measure for such a binary classification problem. You can see this for instance by looking at some of the top Google results for “F1 score” like “Accuracy, Precision, Recall or F1?” by Koo Ping Shun.

Key takeaways:

Dr. Ansgar Grüne holds a Ph.D. in Computer Science and is a senior data scientist for the Relevance and Recommendations team. He works on improving the ranking order of activities on our pages and has previously worked with GetYourGuide's Catalog team on categorizing our activities. Both topics include binary classification tasks, for example, to decide whether an activity belongs to a category like “family friendly” or not. In this blog post, he explains how to interpret the most popular quality metric for such tasks.

{{divider}}

Why does a good F1 score matter?

Last year, I worked on a machine learning model that suggests whether our activities belong to a category like “family friendly” or “not family friendly”. Following our Data Science principles, I came up with a simple first version optimizing for its F1 score , the most-recommended quality measure for such a binary classification problem. You can see this for instance by looking at some of the top Google results for “F1 score” like “Accuracy, Precision, Recall or F1?” by Koo Ping Shun. When I presented the results to my product team, they wondered, “How good is this achieved F1 score of 0.56?” I explained how the metric was defined which made the value more understandable. In addition, I had done the task on a small sample set myself and showed that the model also performed well compared to the human F1 score. However, I wondered if I could give an even more intuitive meaning to the F1 score.

Clearly, the higher the F1 score the better, with 0 being the worst possible and 1 being the best. Beyond this, most online sources don’t give you any idea of how to interpret a specific F1 score. Was my F1 score of 0.56 good or bad? It turns out that the answer depends on the specific prediction problem itself.

Today, I’ll explain how you can interpret a specific F1 score by comparing it to what is achievable without any knowledge. This interpretation uncovers some unfavorable aspects of the F1 score. I will therefore also mention an alternative metric in the end. But first, let’s quickly recap.

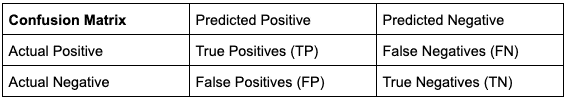

Recap: confusion matrix and classification quality

The confusion matrix divides up the results of a certain binary classification problem.

Accuracy tells us what proportion of the data points we predicted correctly, i.e. accuracy := (TP+TN) / (TP+FN+FP+TN). The biggest and most well known problem with accuracy is when you have imbalanced datasets. Say, 75% of GetYourGuide’s activities were family-friendly. Then, a model that predicts family-friendly for all activities will get an accuracy of 75%. Our bad quality classifier gets a seemingly very good quality score.

The standard answer to this problem is that you consider instead recall and precision. Recall is the share of the actual positive cases which we predict correctly, i.e. recall := TP / (TP+FN). In our toy example, let’s say that family-friendly is the positive class. Then, always predicting family-friendly results in an optimal recall of 100%. Always predicting not family-friendly results in 0% recall.

The classical counterpart to recall is precision. It is the share of the predicted positive cases which are correct, e.g. precision := TP / (TP+FP). In this case, always predicting the positive class (family-friendly) will result in the worst possible outcome. Predicting almost all cases as negative will let you reach a precision of 100% on the other hand. All you need to get a perfect precision is to correctly predict the positive class for one case where you are absolutely sure.

Hence, using a kind of mixture of precision and recall is a natural idea. The F1 score does this by calculating their harmonic mean, i.e. F1 := 2 / (1/precision + 1/recall). It reaches its optimum 1 only if precision and recall are both at 100%. And if one of them equals 0, then also F1 score has its worst value 0. If false positives and false negatives are not equally bad for the use case, Fᵦ is suggested, which is a generalization of F1 score.

If you want a detailed introduction to these metrics, check out this great post on Medium, Confusion Matrix, Accuracy, Precision, Recall, F1 Score - Binary Classification Metric.

What is a good F1 score?

In summary, the F1 score is a good choice for comparing different models predicting the same thing. Yet, how good is a given F1 score overall, say my model’s 0.56?

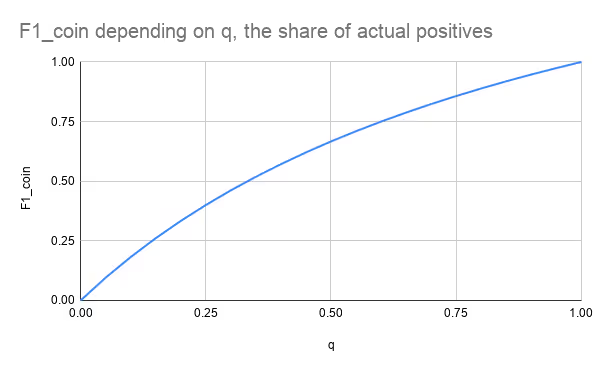

To answer this we look at the best score we can reach without any knowledge, say by flipping a coin. This coin can be unfair. Let p be the probability of the coin predicting a positive outcome, i.e. a perfectly fair coin would have p=0.5. Let q be the share of actual positive cases. In this scenario it is not difficult to derive from the definitions that precision = q and recall = p, see Appendix 1. Hence, precision is not influenced by the set up of our coin. And recall is best if the coin always predicts positive (p=1). Surprisingly, always predicting positive is the best we can do in terms of F1 score if we don’t have any information. It is due to F1 score being unsymmetric between positive and negative cases. It pays more attention to the positive cases. Our observations result in the maximum coin F1 score of ².

F1_coin = 2q / (q+1).

The following table and chart give an intuition on the optimal coin F1-score for differently balanced classification problems:

This shows that the F1 score depends heavily on how imbalanced our training dataset is. We can have an independent score by normalizing the F1 score. We could say that the coin should always result in a normalized F1 score of 0 and that the optimal score remains 1. This is achieved by the formula:

F1_norm := (F1-F1_coin)/(1-F1_coin)

My activity-category classification problem had only 1% actual positive cases, q=0.01. This results in F1_coin ≈ 0.02 and F1_norm ≈ 0.55. The prediction quality is roughly in the middle between the best guess without any knowledge and a perfect prediction.

Florian Wetschorek and his colleagues have recently used the same normalization approach in their interesting post RIP correlation. Introducing the Predictive Power Score. They use a slightly different baseline, predicting always the majority class, which however does not maximize F1 score as we have discussed above..

Beyond F1 Score

We have seen that the F1 score has two undesired characteristics: not being normalized and not being symmetric (when swapping positive and negative cases). There are other metrics like Matthew’s Correlation Coefficient (MCC) not having this problem. That one is explained and promoted nicely in Boaz Shmueli’s “Matthews Correlation Coefficient is The Best Classification Metric You’ve Never Heard Of “. A complete list and a more thorough investigation are outside of the scope of this blog post.

If you are interested in joining our engineering team, check out our open positions.

Appendix 1: Deriving the Formula for F1_coin

Remember that q is the share of actual positive cases and p is the probability that the coin predicts a positive outcome. Assume we draw randomly n cases, then the expected values are:

True Positives = TP = n*q*p

True Negatives = TN = n*(1-q)*(1-p)

False Positives = FP = n*(1-q)*p

False Negatives = FN = n*q*(1-p)

Hence:

Precision = TP / (TP+FP) = q*p / (q*p + (1-q)*p) = q

Recall = TP / (TP+FN) = q*p / (q*p + q*(1-p)) = p

F1 = 2 / (1/q + 1/p) = 2q*p / (q+p)

Looking at the first part of the equation above, the F1 score is clearly monotonously increasing in p. Hence, the maximum is reached for p=1 and it equals

F1_coin = 2 / (1+1/q) = 2q / (1+q)

Appendix 2: Unsymmetric Behavior of F1 and F1_norm

Let us look at this concrete example: TP = 40, FN = 40, TN = 16, FP = 4.

This means that q = (TP+FN)/(TP+TN+FP+FN) = 0.8 which implies

F1_coin = 2q/(q+1) = 1.6/1.8 ≈ 0.9.

From precision = TP/(TP+FP) = 40/44 ≈ 0.91 and recall = TP/(TP+FN) = 40/(40+40) = 0.5, we conclude F1 = 2 / (1/0.91 + 1/0.5) ≈ 0.65 and F1_norm ≈ (0.65-0.9)/(1-0.9) = -2.5.

If we swap the meanings of positive and negative, we get

TN = 40, FP = 40, TP = 16, FN = 4.

This means that p = (TP+FN)/(TP+TN+FP+FN) = 0.2 which implies

F1_coin = 2q/(q+1) = 0.4/1.2 ≈ 0.33.

From precision = TP/(TP+FP) = 16/56 ≈ 0.29 and recall = TP/(TP+FN) = 16/(16+4) = 0.8 we conclude F1 ≈ 2 / (1/0.8 + 1/0.29) ≈ 0.43 and F1_norm ≈ (0.43-0.33)/(1-0.33) = 0.15.

Both, F1 value and F1_norm value change a lot. They are very unsymmetric. In one direction, we predict better than the coin, while in the other direction we don’t. This could be a topic for a follow up in the future.

.JPG)